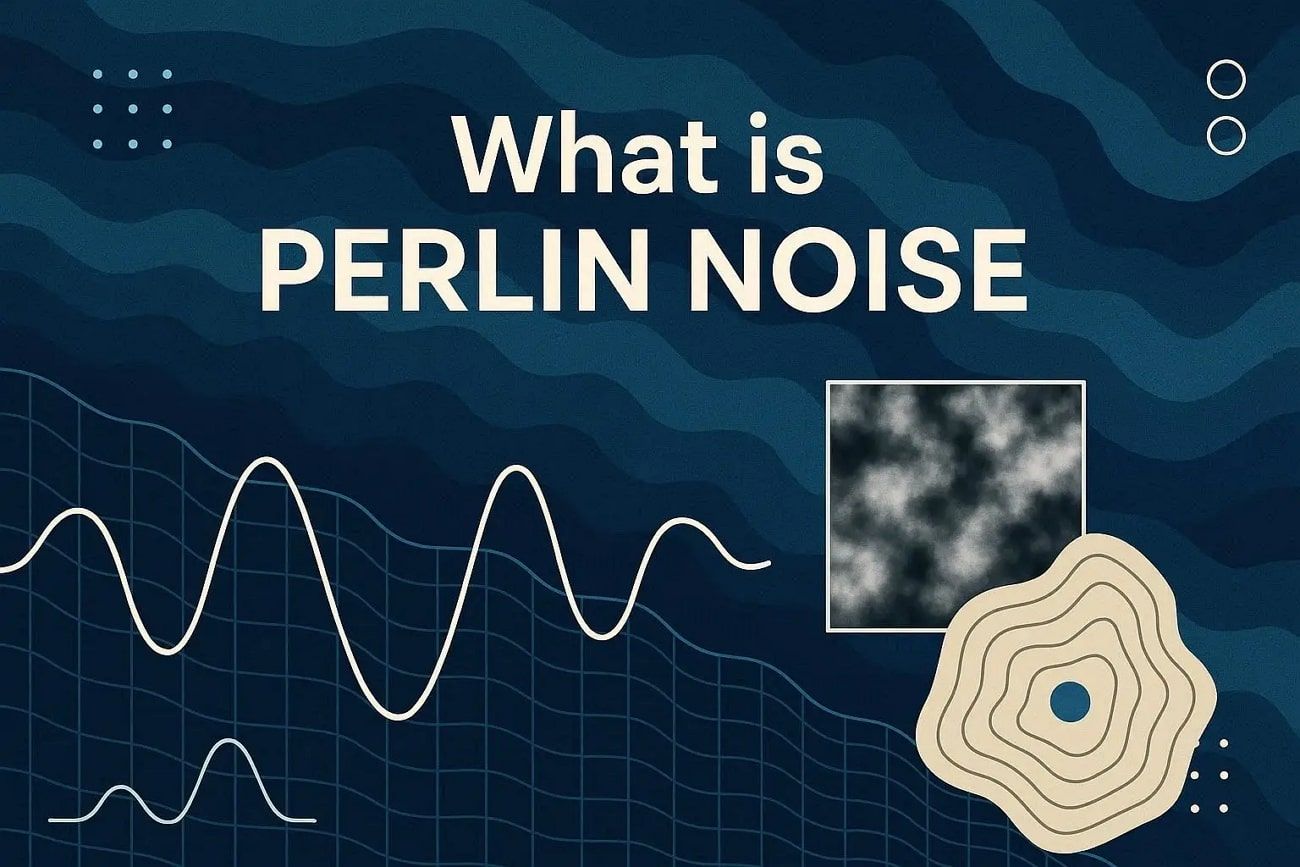

What Is Perlin Noise? Definition, Principles, and Workflow

Imagine a digital world where clouds drift, rivers flow, and mountains rise without looking artificial. Perlin noise makes this possible by creating smooth, natural patterns that do not repeat.

Understanding the definition, principles, and workflow of Perlin noise lets you shape digital landscapes and effects that feel natural. This guide explains what Perlin noise is, how it works, its key concepts, and a step-by-step workflow.

Part 1. What Is Perlin Noise?

Perlin noise is a type of gradient noise created by Ken Perlin in 1983. He developed it after working on the film Tron (1982), to make computer graphics look more natural and less perfect or plastic. Unlike conventional random noise, which resembles TV static, Perlin noise produces smooth, continuous patterns. This makes it ideal for creating natural effects in computer graphics.

Main Features

This gradient noise has special features that make it helpful for artists and developers.

- Coherent Randomness: Values near each other are related, so patterns appear smooth instead of scattered or noisy.

- Gradient-Based Construction: A grid of random gradient directions interacts with positions; then, smooth blending creates natural patterns.

- Multi-Octave Structure: Multiple layers of Perlin noise combine at different sizes and strengths, controlling big shapes and small details.

- Dimensional Flexibility: Works in 1D, 2D, 3D, or more, so it can create textures, volumes, or changing effects over time.

- Repeatability: Using the same input and seed always gives the same output, which is useful for consistent procedural results.

Common Categories and Variants

Perlin noise usually refers to the basic algorithm, but it comes in several types and variants.

By Dimension

- 1D Noise: Used for simple changes over time, such as animation curves or time-based effects.

- 2D Noise: Commonly used for textures, height maps, and patterns that appear on flat surfaces.

- 3D/4D Noise: Applied to volumetric effects such as clouds or smoke, and to effects that change across space and time.

By Octave/Composition Style

- Basic Perlin Noise: Single layer of noise, smooth and straightforward in structure.

- Fractal Brownian Motion: Combines multiple layers of Perlin Noise with different sizes and strengths to add detail.

- Billow Noise: Uses the absolute value of noise to create soft, rounded shapes like clouds or gentle hills.

- Ridged Noise: Converts noise into sharp ridges, ideal for mountains and rough surfaces.
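The billow and ridged variants can be sketched as simple transforms of a single noise value. The formulas below are one common formulation, not the only one, and the function names `billow` and `ridged` are illustrative assumptions. The input `n` is assumed to lie in [-1, 1].

```python
def billow(n: float) -> float:
    """Fold the noise around zero, then remap |n| from [0, 1] back to [-1, 1]."""
    return 2.0 * abs(n) - 1.0


def ridged(n: float) -> float:
    """Invert the fold so that zero crossings become sharp peaks in [0, 1]."""
    return 1.0 - abs(n)


samples = [-1.0, -0.5, 0.0, 0.5, 1.0]
print([billow(n) for n in samples])  # [1.0, 0.0, -1.0, 0.0, 1.0]
print([ridged(n) for n in samples])  # [0.0, 0.5, 1.0, 0.5, 0.0]
```

In practice these transforms are usually applied per octave inside a fractal sum rather than to a single layer.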

Part 2. History and Evolution of Perlin Noise in 3D Graphics and Animation

The history of Perlin noise shows how a need led to an invention. It helped computer graphics change from rigid, plastic shapes to more natural, real-world looks.

Origins

- Inventor: Ken Perlin, an American computer graphics researcher and professor.

- Time: Early 1980s.

Ken Perlin worked on the movie Tron (1982), whose computer graphics had a hard, machine-like look. Effects required realistic textures such as marble, wood, clouds, and fire, but plain random noise made harsh, unnatural patterns. In 1983, Perlin therefore created a noise function that gave smooth, natural results.

Early Development

1983 (published 1985): Perlin developed the original Perlin Noise algorithm and described it in a paper presented at SIGGRAPH 1985.

Concept: Instead of using random values at each point, he used pseudo-random gradient vectors on a grid and interpolated values smoothly between points.

First Major Use

- In the film industry, Tron (1982) was the starting point: Perlin's experience on that film prompted his early experiments with noise-based textures.

- Star Wars: Episode I - The Phantom Menace (1999) and later movies widely used Perlin Noise for CGI textures and particle effects.

This invention enabled artists and programmers to create realistic, natural textures automatically, so they did not need hand-drawn textures.

Improvements and Variations

After the original 1985 version, Perlin noise evolved in several ways:

- Improved Perlin Noise (2002): Ken Perlin released an improved version to remove visual errors and reduce directional bias. It uses simpler gradient selection and better interpolation.

- Simplex Noise (2001–2002): Also developed by Ken Perlin, Simplex noise runs faster and looks cleaner in higher dimensions. It reduces computational load and minimizes visual artifacts while keeping the gradient-based character of the patterns.

- Extensions: Multi-octave or fractal noise uses several Perlin noise layers at different sizes to add texture detail. Today, it helps create game terrains, clouds, smoke, fire, and even sound effects.

Part 3. How Perlin Noise Works

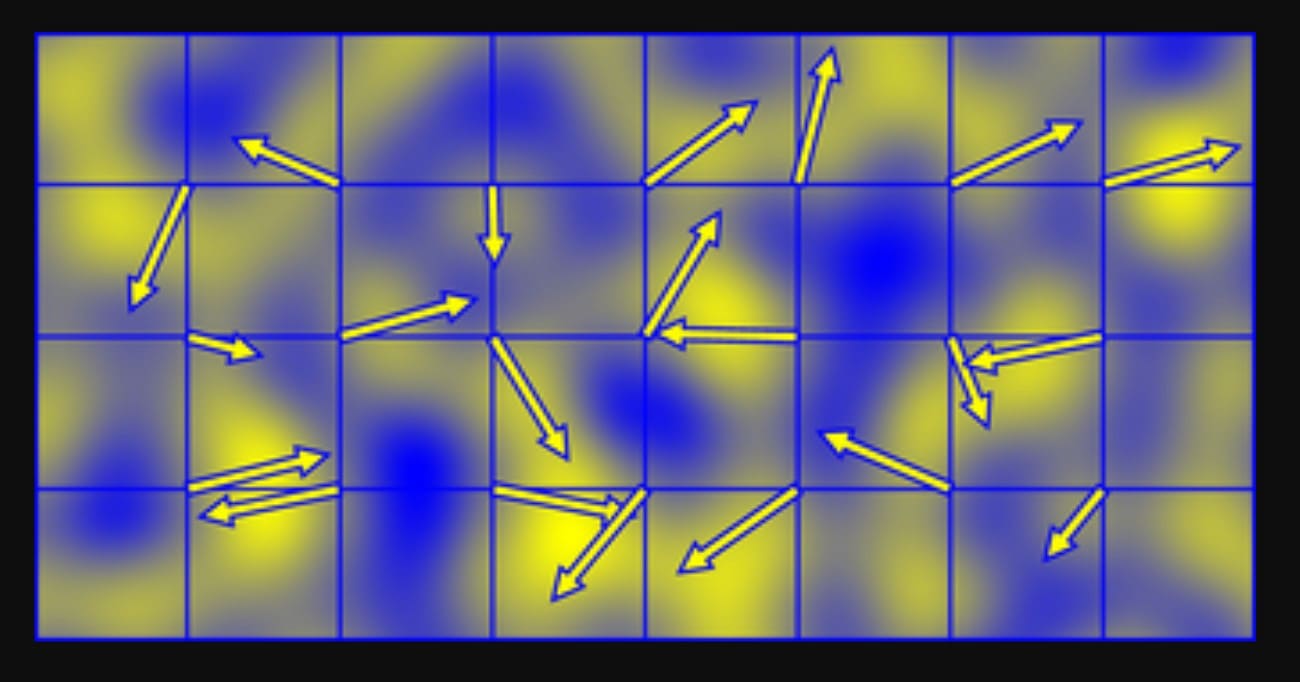

The Perlin noise algorithm works by dividing space into a regular grid in 1D, 2D, or 3D. Each corner of the grid does not store a random number; rather, it stores a pseudo-random direction vector, called a gradient.

When you ask for a noise value at any point, the algorithm looks at the nearby grid corners. It checks how far the point is from each corner, measures how well the corner’s gradient points toward that location, and then smoothly blends all corner influences into one final value.

Step-by-step process (2D Example)

1. Find The Grid Cell:

For a point (x, y), the algorithm first finds which grid cell contains it, and this is done by taking the floor of the coordinates:

The bottom-left corner of the cell becomes (i, j) = (⌊x⌋, ⌊y⌋).

Next, it calculates the position of the point inside the cell:

- xf = x − i

- yf = y − j

Both values stay between 0 and 1.
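As a minimal sketch of this first step, the helper below (the name `locate_cell` is an assumption for illustration) splits a coordinate into its integer cell and fractional offset. It uses `math.floor` rather than `int()`, because truncation rounds toward zero and would pick the wrong cell for negative coordinates.

```python
import math


def locate_cell(x: float, y: float):
    """Return the cell corner (i, j) and the offset (xf, yf) inside that cell."""
    i, j = math.floor(x), math.floor(y)
    xf, yf = x - i, y - j  # both in [0, 1)
    return i, j, xf, yf


print(locate_cell(3.25, -1.5))  # (3, -2, 0.25, 0.5)
```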

2. Get Gradients at The Cell Corners:

Each of the four corners of the cell has a unit gradient vector:

- (i, j)

- (i + 1, j)

- (i, j + 1)

- (i + 1, j + 1)

These gradients are chosen using a permutation table. This makes them appear random but remains consistent when the same grid point is used.
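One way to sketch the permutation-table lookup is shown below. It is an illustration under stated assumptions, not Perlin's reference code: the table is shuffled from a seed with Python's `random` module, and the hash value is turned into an angle to get a unit vector, whereas the improved 2002 algorithm picks from a small fixed set of directions. The names `make_permutation` and `gradient_at` are hypothetical.

```python
import math
import random


def make_permutation(seed: int) -> list:
    """Shuffle 0..255 with a seeded generator; double it to avoid index wrap checks."""
    rng = random.Random(seed)
    perm = list(range(256))
    rng.shuffle(perm)
    return perm + perm


def gradient_at(perm: list, i: int, j: int):
    """Hash the grid point (i, j) to a unit gradient vector."""
    h = perm[perm[i & 255] + (j & 255)]  # value in 0..255
    angle = 2.0 * math.pi * h / 256.0
    return math.cos(angle), math.sin(angle)


perm = make_permutation(seed=42)
print(gradient_at(perm, 3, 7) == gradient_at(perm, 3, 7))  # True
```

Because the lookup masks coordinates with `& 255`, this sketch repeats every 256 grid cells along each axis.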

3. Measure Corner Influence with Dot Products:

For each corner, the algorithm builds an offset vector from that corner to the point. For example, from (i, j) to (x, y), the vector is (xf, yf).

It then takes the dot product between this offset vector and the corner’s gradient vector:

- If the gradient points toward the point, the value is positive.

- If it points away, the value is negative.

- If it is perpendicular to the offset, the value is near zero.

This tells how strongly each corner affects the final noise value.
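The sign behavior described above can be checked with a few hand-picked vectors. This is a small sketch; `corner_offsets` and `corner_influence` are illustrative names, and the gradients are chosen by hand rather than looked up from a table.

```python
def corner_offsets(xf: float, yf: float):
    """Offset vectors from the four cell corners to the point, in the order
    (i, j), (i + 1, j), (i, j + 1), (i + 1, j + 1)."""
    return [(xf, yf), (xf - 1.0, yf), (xf, yf - 1.0), (xf - 1.0, yf - 1.0)]


def corner_influence(gradient, offset) -> float:
    """2D dot product of a corner's gradient with its offset vector."""
    return gradient[0] * offset[0] + gradient[1] * offset[1]


offset = (0.5, 0.5)
print(corner_influence((1.0, 0.0), offset))       # 0.5  (gradient points toward the point)
print(corner_influence((-1.0, 0.0), offset))      # -0.5 (gradient points away)
print(corner_influence((0.0, 1.0), (0.5, 0.0)))   # 0.0  (gradient is perpendicular)
```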

4. Smooth Values Using the Fade Function:

Before blending the corner values, a smooth fade curve is applied to xf and yf:

f(t) = 6t⁵ − 15t⁴ + 10t³

This curve flattens at the edges of each cell, which prevents visible seams and keeps the noise smooth across grid boundaries.
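The fade curve is short enough to write out directly. The sketch below evaluates it in Horner form. The flattening comes from its derivatives: f′(t) = 30t²(t − 1)² and f″(t) = 60t(2t − 1)(t − 1) are both zero at t = 0 and t = 1.

```python
def fade(t: float) -> float:
    """Quintic fade curve 6t^5 - 15t^4 + 10t^3, written in Horner form."""
    return t * t * t * (t * (t * 6.0 - 15.0) + 10.0)


print(fade(0.0), fade(0.5), fade(1.0))  # 0.0 0.5 1.0
```

Near the cell edges the curve stays much closer to 0 or 1 than a straight line would, which is what hides the grid boundaries.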

5. Interpolate The Corner Results:

First, the algorithm blends the bottom left and bottom right corner values using the faded xf value. It does the same for the two top corners, then blends those two results vertically using the faded yf value. The final result is the Perlin noise value at (x, y), usually between −1 and 1.
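The five steps above can be combined into one compact function. The following is a minimal sketch under stated assumptions, not a reference implementation: gradients are unit vectors derived from a seeded, shuffled permutation table (the improved algorithm uses a fixed set of directions instead), and the names `make_perm`, `grad_dot`, and `perlin2d` are illustrative.

```python
import math
import random


def make_perm(seed: int = 0) -> list:
    """Seeded permutation of 0..255, doubled so lookups never run off the end."""
    rng = random.Random(seed)
    p = list(range(256))
    rng.shuffle(p)
    return p + p


def fade(t: float) -> float:
    return t * t * t * (t * (t * 6.0 - 15.0) + 10.0)


def lerp(a: float, b: float, t: float) -> float:
    return a + t * (b - a)


def grad_dot(perm: list, ix: int, iy: int, dx: float, dy: float) -> float:
    """Dot product of the gradient at grid point (ix, iy) with the offset (dx, dy)."""
    h = perm[perm[ix & 255] + (iy & 255)]
    angle = 2.0 * math.pi * h / 256.0
    return math.cos(angle) * dx + math.sin(angle) * dy


def perlin2d(x: float, y: float, perm: list) -> float:
    # Step 1: find the cell and the position inside it.
    i, j = math.floor(x), math.floor(y)
    xf, yf = x - i, y - j
    # Steps 2 and 3: gradient at each corner, dotted with the offset to the point.
    n00 = grad_dot(perm, i, j, xf, yf)
    n10 = grad_dot(perm, i + 1, j, xf - 1.0, yf)
    n01 = grad_dot(perm, i, j + 1, xf, yf - 1.0)
    n11 = grad_dot(perm, i + 1, j + 1, xf - 1.0, yf - 1.0)
    # Step 4: fade the interpolation weights.
    u, v = fade(xf), fade(yf)
    # Step 5: blend along x for the bottom and top edges, then along y.
    return lerp(lerp(n00, n10, u), lerp(n01, n11, u), v)


perm = make_perm(seed=1)
print(perlin2d(2.0, 5.0, perm))  # 0.0, because the noise is zero at every grid point
```

With unit gradients in 2D, the output of this sketch stays within about ±0.71 rather than filling the full [-1, 1] range, so many implementations rescale the result.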

Part 4. Perlin Noise Workflow - Game Terrains Example

Perlin noise creates terrain by sampling a smooth noise field over a grid, and each noise value becomes a “height.” Multiple layers add detail, producing mountains, hills, and plains, and the heightmap can control meshes, textures, and biomes in games.

Basic Heightmap Workflow

1. Sample noise over a 2D grid:

- For each grid cell or vertex (x, y), compute:

n = noise(x * frequency, y * frequency)

- Low frequency (0.01–0.05) gives broad hills. High frequency gives small bumps.

2. Map noise to height:

- Normalize noise from [-1, 1] to [0, 1]:

n01 = (n + 1) / 2

- Scale to terrain range:

height = minHeight + n01 * (maxHeight - minHeight)

- Store heights in a heightmap array.
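The sampling and mapping steps can be sketched as follows. The `noise` argument stands for any 2D noise function returning values in [-1, 1]. The `placeholder_noise` used here is only a smooth stand-in so the example runs on its own; it is not Perlin noise. All function names are assumptions for illustration.

```python
import math


def noise_to_height(n: float, min_height: float, max_height: float) -> float:
    """Normalize n from [-1, 1] to [0, 1], then scale to the terrain range."""
    n01 = (n + 1.0) / 2.0
    n01 = min(1.0, max(0.0, n01))  # guard against slight overshoot
    return min_height + n01 * (max_height - min_height)


def build_heightmap(noise, width, depth, frequency, min_height, max_height):
    """Sample the noise on a width x depth grid and store heights row by row."""
    return [
        [noise_to_height(noise(x * frequency, y * frequency), min_height, max_height)
         for x in range(width)]
        for y in range(depth)
    ]


def placeholder_noise(x: float, y: float) -> float:
    """Stand-in for a real noise function (assumption, for demonstration only)."""
    return math.sin(x) * math.cos(y)


heightmap = build_heightmap(placeholder_noise, width=4, depth=3,
                            frequency=0.05, min_height=0.0, max_height=100.0)
print(len(heightmap), len(heightmap[0]))  # 3 4
```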

3. Build the terrain mesh:

- In Unity, Unreal Engine, or other engines, assign height values to terrain or mesh vertices.

- The engine creates smooth hills and valleys.

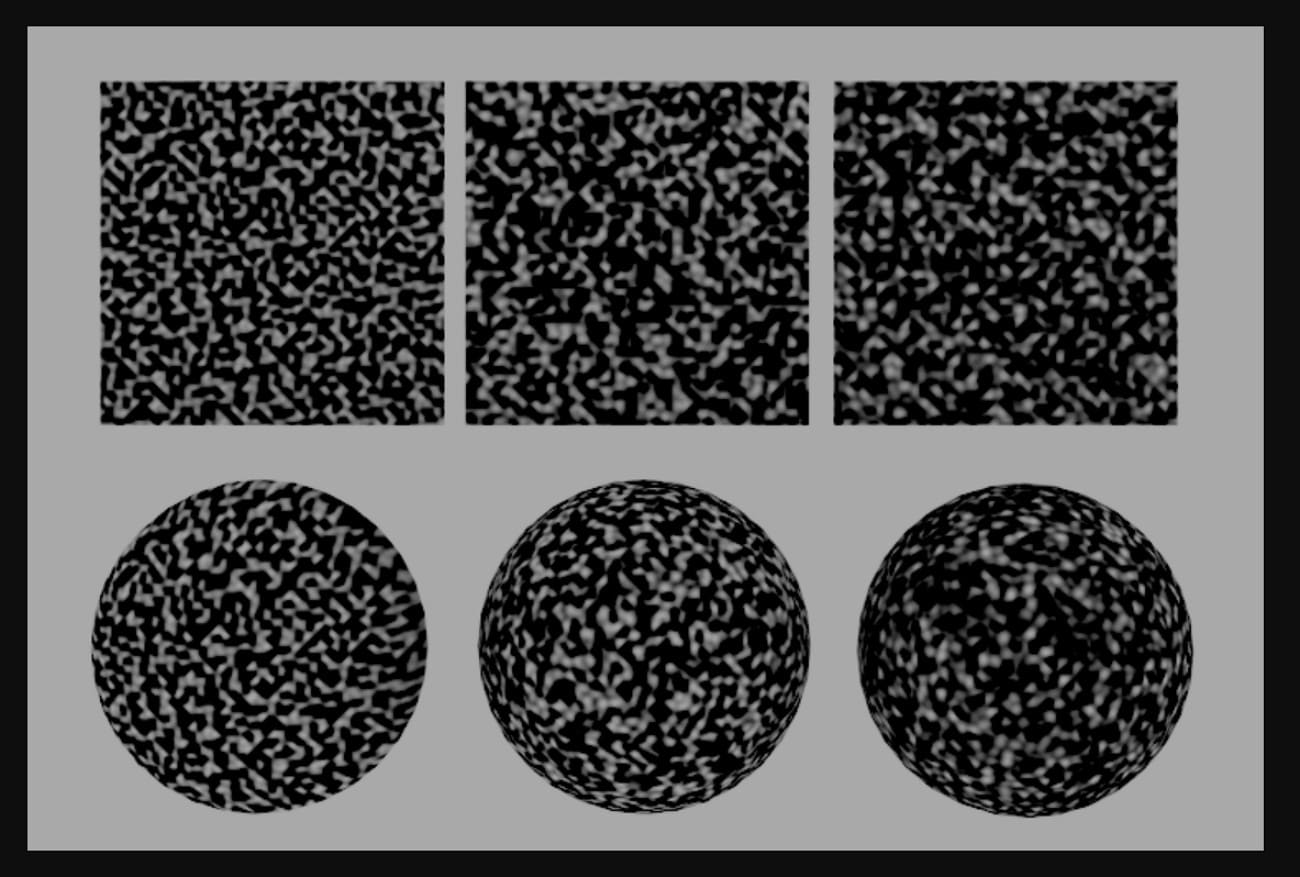

Multi-Octave / Fractal Terrain

A single layer of noise looks too smooth and uniform. Multiple octaves add detail.

Steps:

For each octave, sample noise at a higher frequency and lower amplitude:

- value += noise(x * freq, y * freq) * amplitude

After each octave, multiply the frequency by the lacunarity (≈2.0) and the amplitude by the persistence (≈0.4–0.6).

Result:

- Low-frequency octaves create large landforms.

- High-frequency octaves add small bumps and realistic detail.
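The octave loop can be sketched as below. The function takes any 2D noise callable, so it does not depend on a particular Perlin implementation. Dividing by the summed amplitudes is an added normalization choice (an assumption, not part of the steps above) that keeps the result in the same range as a single octave.

```python
def fbm(noise, x: float, y: float, octaves: int = 4,
        lacunarity: float = 2.0, persistence: float = 0.5) -> float:
    """Sum several octaves of noise, each at higher frequency and lower amplitude."""
    value = 0.0
    amplitude = 1.0
    freq = 1.0
    total_amplitude = 0.0
    for _ in range(octaves):
        value += noise(x * freq, y * freq) * amplitude
        total_amplitude += amplitude
        freq *= lacunarity        # finer detail in the next octave
        amplitude *= persistence  # weaker contribution in the next octave
    return value / total_amplitude


# With a constant "noise" of 1.0 the amplitudes are 1, 0.5, 0.25, 0.125,
# so the sum 1.875 divided by 1.875 gives exactly 1.0.
print(fbm(lambda x, y: 1.0, 0.0, 0.0))  # 1.0
```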

Terrain Types from Height

Assign terrain types based on height:

- < 0.3 → water

- 0.3–0.4 → beach

- 0.4–0.7 → grassland

- > 0.7 → rock/snow

Assign colors or textures in the same way (e.g., blue, green, grey, white).
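The thresholds above map directly onto a small lookup function. In this sketch the height is assumed to be normalized to [0, 1], and the handling of exact boundary values (for example, whether 0.7 counts as grassland) is an assumption, since the list does not specify it.

```python
def terrain_type(height: float) -> str:
    """Classify a normalized height in [0, 1] into a terrain type."""
    if height < 0.3:
        return "water"
    if height < 0.4:
        return "beach"
    if height <= 0.7:
        return "grassland"
    return "rock/snow"


print([terrain_type(h) for h in (0.1, 0.35, 0.5, 0.9)])
# ['water', 'beach', 'grassland', 'rock/snow']
```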

Common Game Patterns

- Static Worlds: Generate a full heightmap at startup and assign it to terrain or mesh.

- Infinite/Chunked Worlds: Use world coordinates plus a seed. Chunks are created on the fly but remain consistent.

- Biomes and Features: Combine multiple noise maps (height, temperature, moisture) to place forests, deserts, lakes, and other terrain features.
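The chunked pattern relies on sampling noise at world coordinates rather than chunk-local ones, so neighboring chunks line up and a chunk regenerated later is identical. The sketch below illustrates this with a seeded placeholder function. `make_placeholder_noise`, `generate_chunk`, and `CHUNK_SIZE` are hypothetical names, and the placeholder is not Perlin noise.

```python
import math

CHUNK_SIZE = 16  # samples per chunk side (assumed value)


def make_placeholder_noise(seed: int):
    """Stand-in for a seeded noise function (assumption, for demonstration only)."""
    return lambda x, y: math.sin(x + seed) * math.cos(y + seed)


def generate_chunk(noise, chunk_x: int, chunk_y: int, frequency: float = 0.05):
    """Sample one chunk using world coordinates derived from the chunk index."""
    rows = []
    for local_y in range(CHUNK_SIZE):
        world_y = chunk_y * CHUNK_SIZE + local_y
        row = []
        for local_x in range(CHUNK_SIZE):
            world_x = chunk_x * CHUNK_SIZE + local_x
            row.append(noise(world_x * frequency, world_y * frequency))
        rows.append(row)
    return rows


noise = make_placeholder_noise(seed=7)
first = generate_chunk(noise, 2, -1)
again = generate_chunk(make_placeholder_noise(seed=7), 2, -1)
print(first == again)  # True
```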

Part 5. Perlin Noise vs Simplex Noise

Both Perlin noise and Simplex noise were created by Ken Perlin to fix the artificial look of computer graphics. Simplex noise was designed to improve on Perlin noise by running faster in higher dimensions and removing grid-like patterns.

Conceptual Comparison: Square vs. Triangle

Conceptually, Perlin and Simplex noise are both coherent gradient noise. They place pseudo-random gradients on a lattice, calculate dot products with offsets, and blend the values smoothly.

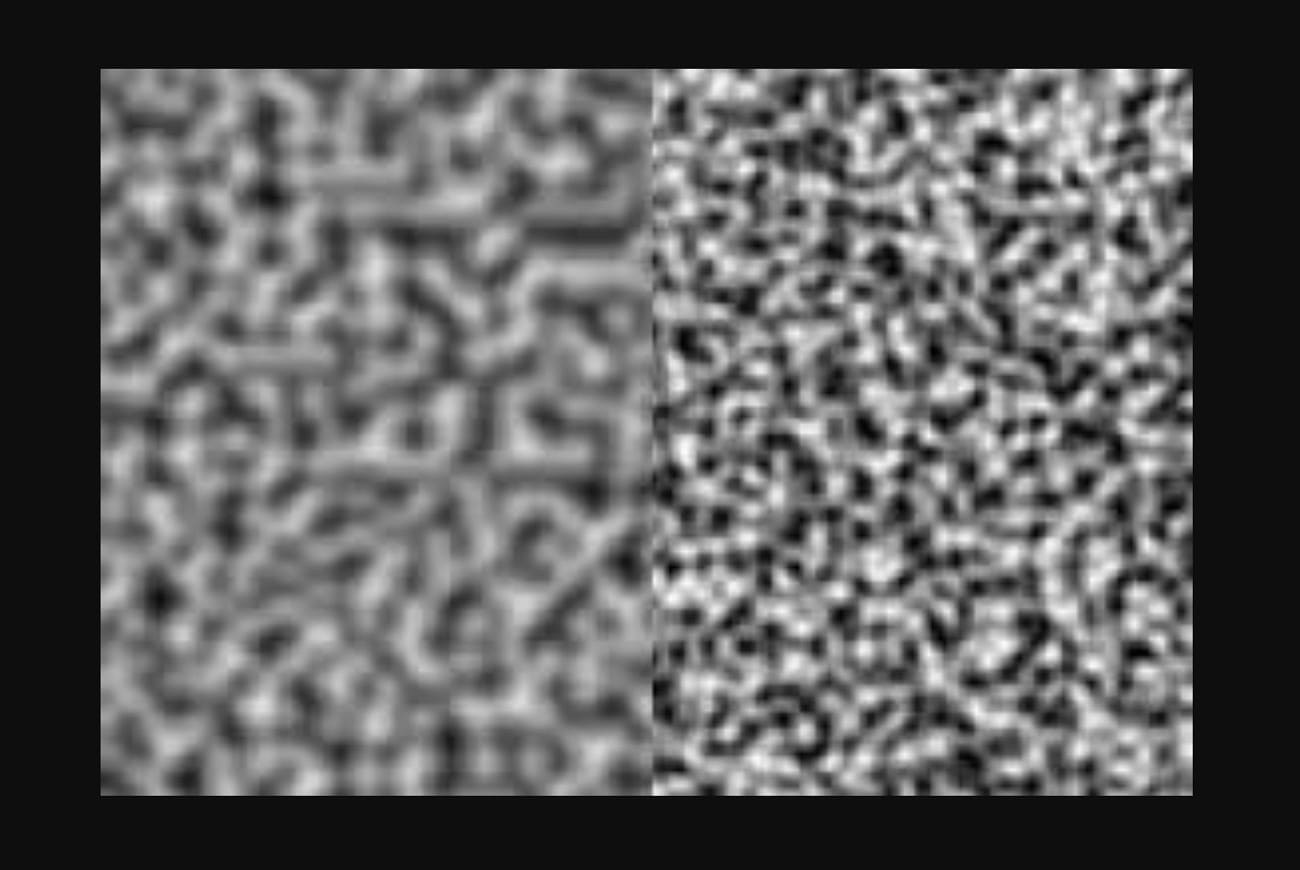

Lattice Structure

- Perlin Noise: Uses a regular grid like squares in 2D, cubes in 3D, hypercubes in higher dimensions, and each point blends the nearest 2ⁿ corners.

- Simplex Noise: Uses simplices like triangles in 2D, tetrahedra in 3D, and each point blends only n+1 corners.
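The difference in corner counts grows quickly with dimension, which a short calculation makes concrete. The helper name `corners_blended` is an illustrative assumption.

```python
def corners_blended(n: int):
    """Corners blended per sample in n dimensions: (Perlin grid, Simplex)."""
    return 2 ** n, n + 1


for n in (2, 3, 4, 6):
    grid, simplex = corners_blended(n)
    print(f"{n}D: Perlin blends {grid} corners, Simplex blends {simplex}")
```

In 4D, for example, that is 16 corners against 5, and in 6D it is 64 against 7.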

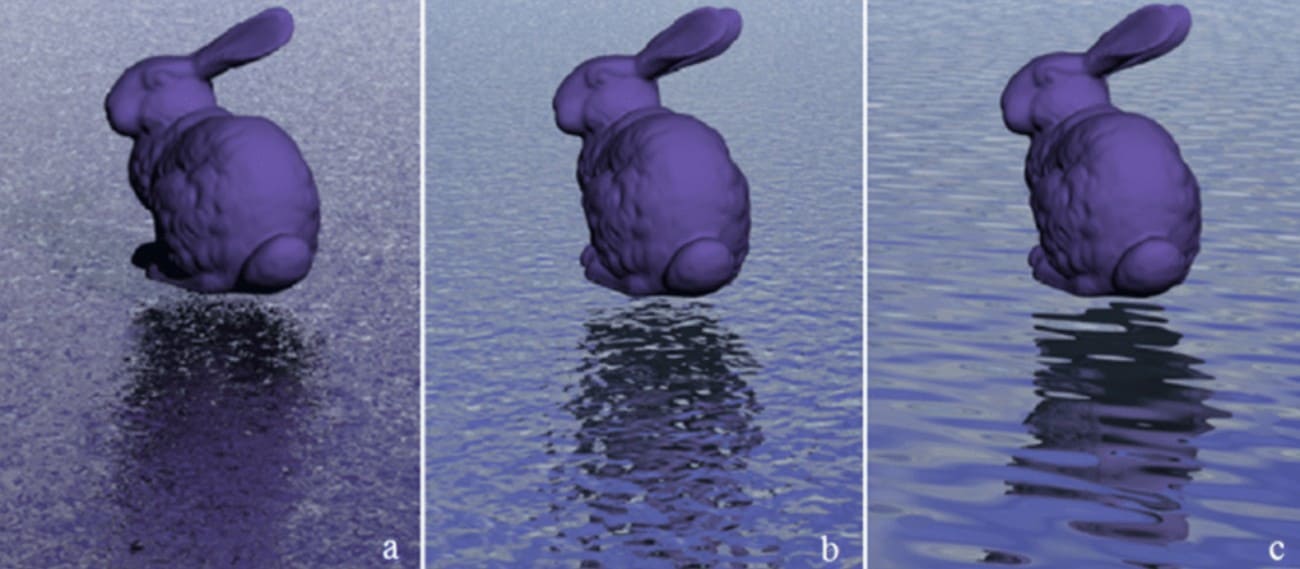

Visual Look

- Perlin Noise: Can show grid-aligned or directional patterns, especially at low resolution or high frequency.

- Simplex Noise: Looks more uniform in all directions, with hexagonal features and fewer visible patterns.

Performance Comparison

| Feature | Perlin Noise | Simplex Noise |
| --- | --- | --- |
| Complexity | Uses all 2^n corners of the nD hypercube. | Uses only n+1 simplex corners in nD. |
| 2D Performance | Very fast (built into many engines). | Slightly faster or comparable. |
| 4D+ Performance | Very slow; heavy memory/CPU load. | High performance; ideal for time-based 4D. |
| Hardware Use | More complex to implement in GPUs. | Designed for easy hardware implementation. |
| Best-suited Scenarios | Learning simple 2D/3D patterns, when artifacts are acceptable. | High‑dimensional noise, artifact‑sensitive visuals, volumetrics, and real‑time with many samples. |

Summary: When to Choose Which?

Use classic Perlin noise when you primarily work in 1D, 2D, or 3D and do not require extreme performance. It provides a simple, well-documented algorithm with many examples in games, shaders, or quick prototypes.

Use Simplex noise instead when you need 3D, 4D, or higher-dimensional noise for volumetric effects or animated fields, and speed matters. It also produces smoother, more uniform patterns with fewer directional artifacts.

Part 6. Applications of Perlin Noise

Perlin noise is more than an art tool. It is a key algorithm used in many fields to create smooth, natural-looking patterns that mimic real-world randomness.

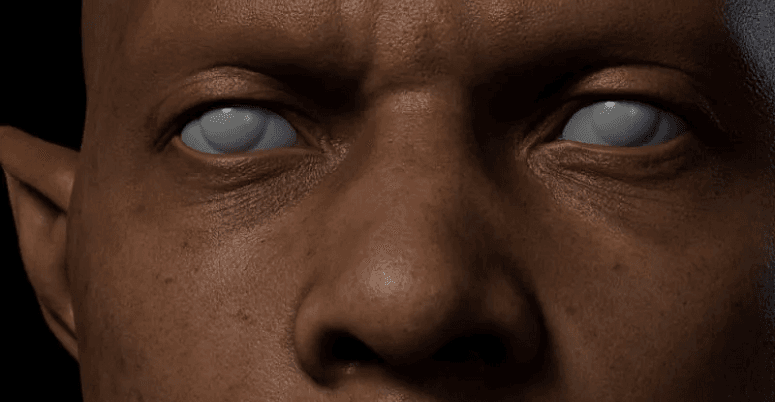

1. Film and Animation

Perlin noise is used to generate realistic textures for clouds, smoke, fire, water, and other natural effects in films and animation. It lets artists build complex surfaces without crafting every detail by hand, which saves time and increases visual realism.

Examples include Star Wars visual effects and fluid simulations in films such as Pixar's Finding Nemo.

2. Video Games

Game developers use Perlin noise to generate terrain, landscapes, caves, and procedural worlds. It is also used to generate material textures, simulate water surfaces, and introduce natural variability to objects and environments.

Examples include the terrain generator of Minecraft, the planetary surfaces of No Man's Sky, and the natural textures of Fortnite and The Witcher series.

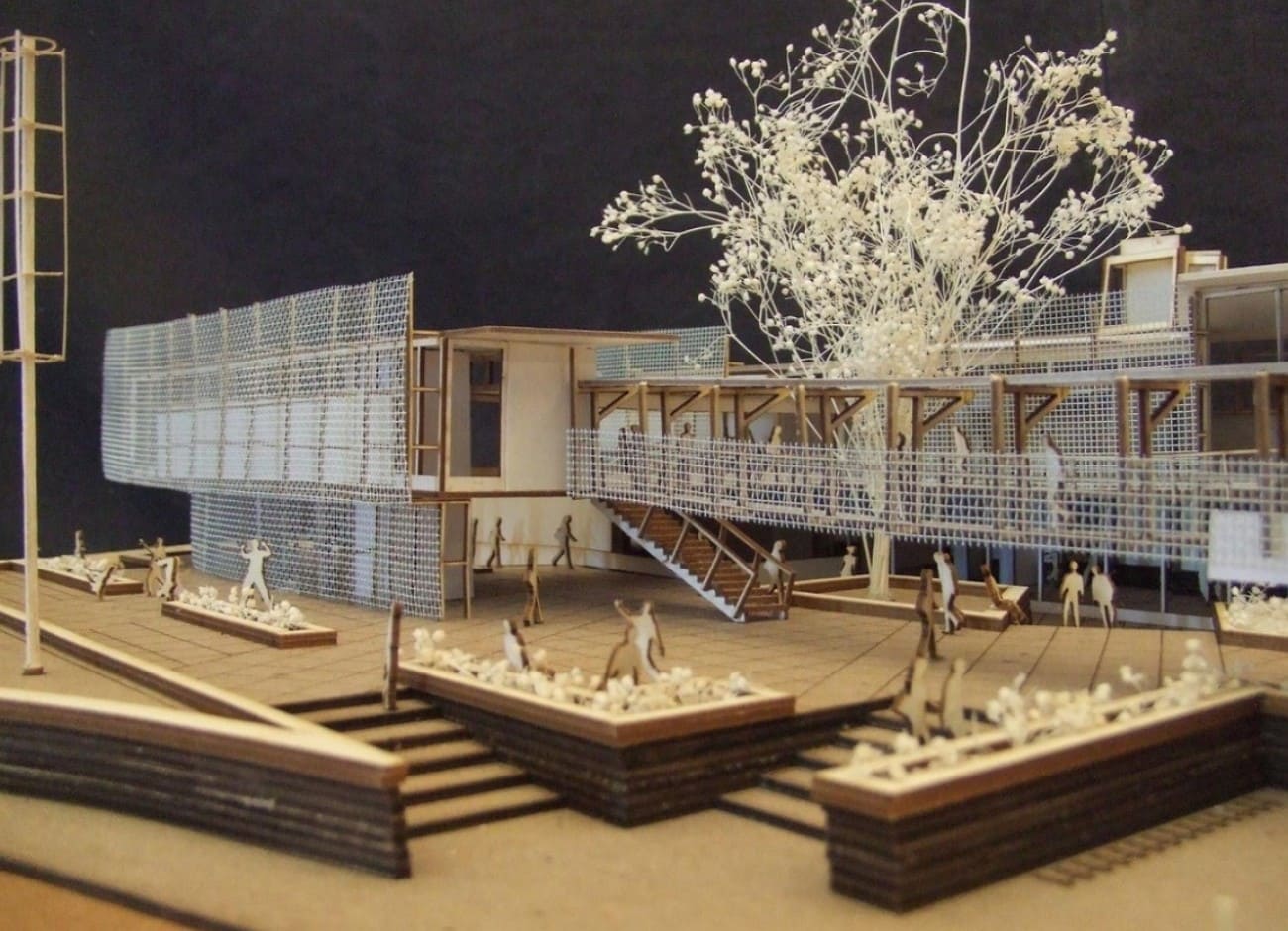

3. Architecture and Design

Architects and designers apply Perlin noise to generate realistic procedural textures for building materials, including wood, marble, and stone. It is also useful for modeling landscapes and visualizing natural environments.

Examples include procedural wood and marble surfaces in architectural renderings, realistic garden or park models, and interior design models.

4. Simulation and Scientific Visualization

In simulations of clouds, fluids, fire, and other natural phenomena, Perlin noise generates realistic patterns. It is also used in medical imaging, meteorology, and environmental simulations to add natural variation.

Examples include weather simulations, fluid dynamics studies, and visualizations of ocean waves or smoke in research and training software.

5. Art and Creative Coding

Digital artists use Perlin Noise to create generative art, procedural patterns, and dynamic visual effects. It enables controlled randomness to produce visually appealing results.

Examples include Processing sketches for flowing patterns, digital wallpapers, procedural textures in Adobe After Effects, and interactive web art using p5.js.

Part 7. How Perlin Noise Impacts Rendering Performance

In real production workflows, Perlin noise is used extensively in procedural textures, volumetric effects, and displacement-driven surfaces, so its impact on rendering performance becomes significant.

In practical projects, Perlin noise is rarely applied as a single layer. Artists often combine multiple octaves to increase detail, drive displacement maps for terrain, or control volumetric density for clouds, smoke, and fog. Each added layer increases shader complexity, sampling frequency, and memory usage during rendering.

For volumetric effects, Perlin noise is frequently evaluated many times along each ray through the volume. This ray-marching process dramatically raises render time, particularly in high-resolution frames or animation sequences where consistency across frames is critical.
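A rough back-of-the-envelope count shows why ray marching is expensive. The sketch below assumes one ray per pixel, a fixed number of steps per ray, and one fractal noise lookup per step. These figures are illustrative assumptions, not measurements from any renderer.

```python
def noise_evaluations(width: int, height: int,
                      steps_per_ray: int, octaves: int) -> int:
    """Estimate single-octave noise evaluations for one frame."""
    return width * height * steps_per_ray * octaves


# 1920 x 1080 frame, 64 steps per ray, 4 octaves per sample
print(noise_evaluations(1920, 1080, 64, 4))  # 530841600
```

Under these assumptions a single Full HD frame already needs over half a billion noise evaluations, before any lighting or shadow rays are counted.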

This brings several common challenges, such as longer render times per frame, GPU memory pressure, and reduced efficiency. To handle the demands of Perlin-noise-based scenes, many studios and independent creators turn to cloud rendering solutions.

Fox Renderfarm provides large-scale GPU and CPU cloud rendering resources designed for complex 3D scenes and procedural workflows. By distributing frames and tasks across high-performance render nodes, this render farm allows artists to process heavy volumetric effects, procedural terrains, and noise-driven materials without being constrained by local hardware limitations.

For projects that rely heavily on Perlin noise, such as cinematic visual effects, large environments, or animation sequences, cloud rendering helps shorten render times while preserving visual quality.

Conclusion

To sum up, Perlin noise is a powerful technique that adds natural, smooth patterns to digital creations. Understanding how it compares with Simplex noise helps creators choose the right method.

Additionally, for accurate and efficient rendering, using a trusted cloud rendering service like Fox Renderfarm is highly recommended.