Ambient Occlusion: What It Is, How It Works, and When to Use It

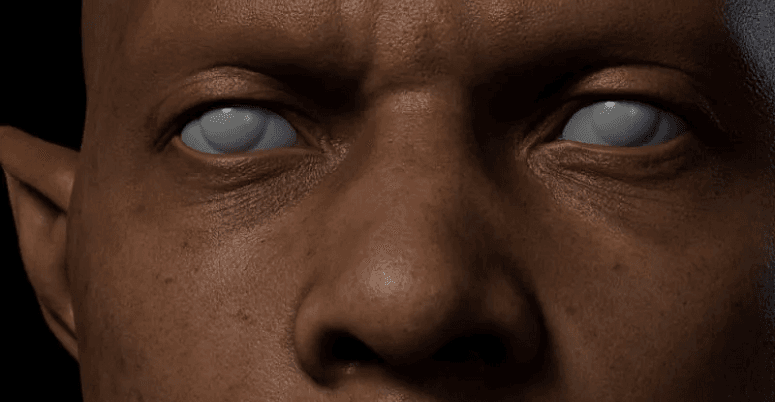

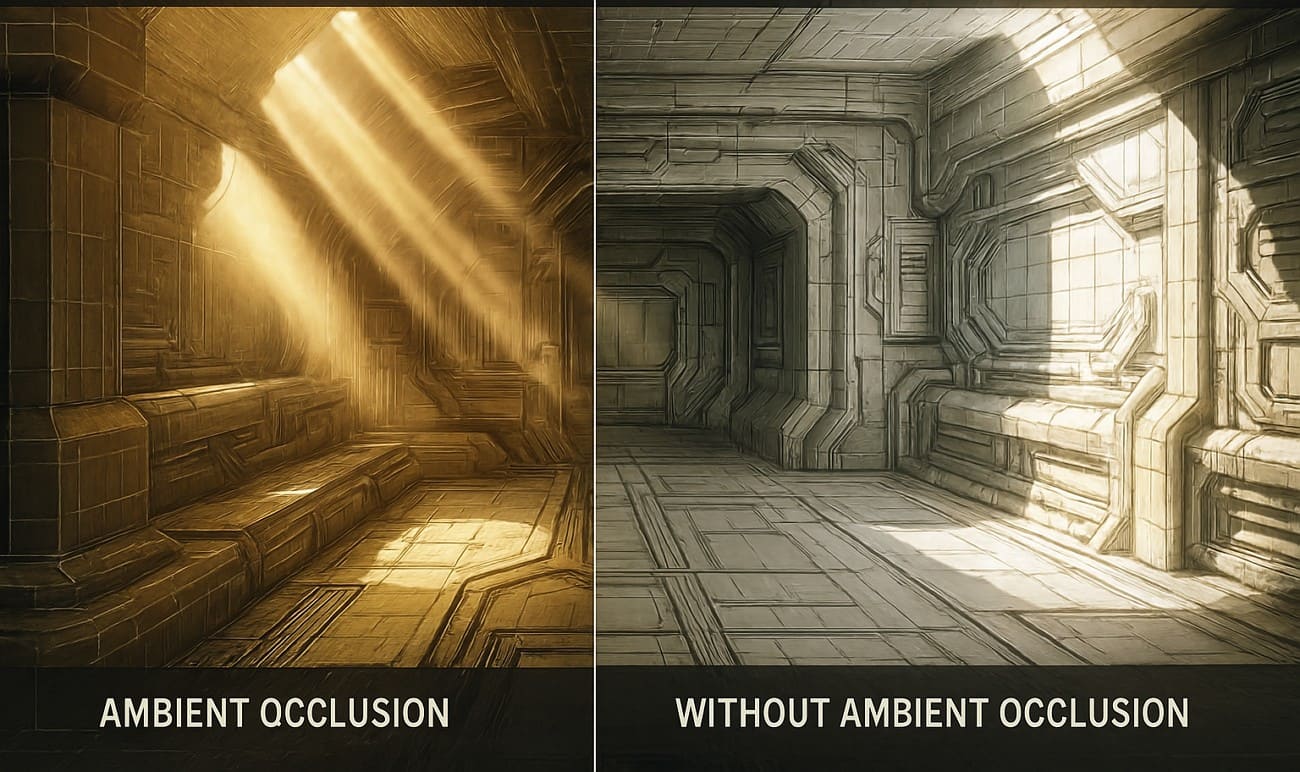

Have you ever noticed 3D objects that look flat or fake, even under full light? This happens when a scene lacks the subtle contact shadows that form where light barely reaches. Ambient occlusion addresses this by adding depth and authenticity to the scene.

It adds gentle shading in tight areas and keeps open surfaces brighter, which makes scenes feel more natural. This tutorial describes what ambient occlusion is, how it functions, and why and where it is best applied to sharpen and enhance the realism of the 3D images you create.

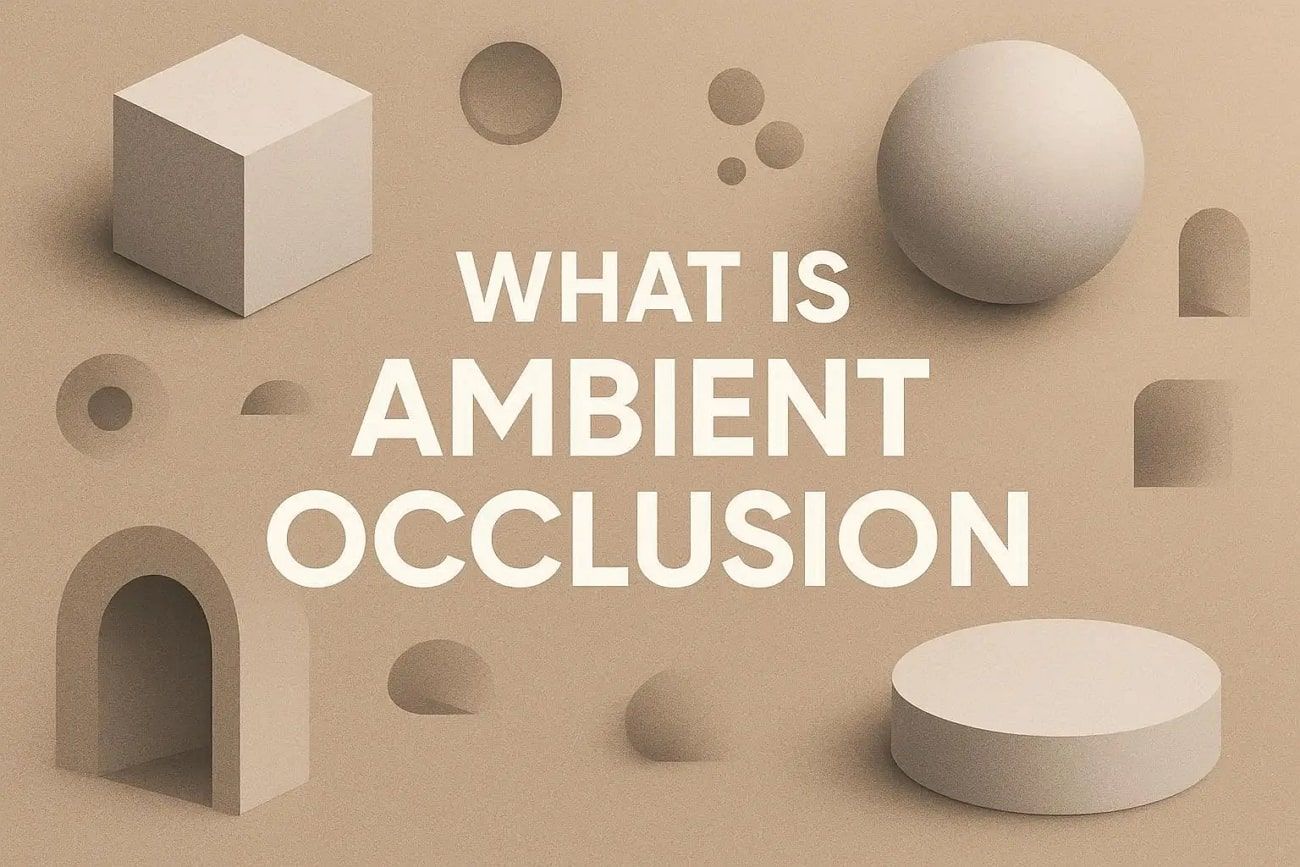

Part 1. What is Ambient Occlusion in 3D Graphics?

Ambient occlusion (AO) is a shading method in 3D graphics that darkens corners, creases, and tight spaces where ambient light is largely blocked. It makes models and scenes look more three-dimensional without the cost of a full lighting simulation, stops scenes from looking flat, and grounds objects naturally.

Ambient occlusion is also cheaper than full global illumination, so it is common in games, visualizations, and VFX as a low-cost way to improve realism. It often works with physically based materials to bring out edges, panel gaps, fabric folds, and brick joints. Artists usually keep the effect subtle: strong AO looks artificial, whereas a light touch adds natural depth.

Part 2. How Does Ambient Occlusion Work?

So what does ambient occlusion actually do? It creates soft shadows in corners, creases, and tight spaces where ambient light is blocked. This gives 3D models and scenes more depth and makes them look more realistic. Areas blocked by nearby geometry appear darker, while open surfaces remain bright, which adds natural contrast at a low rendering cost.

Core Principle and Computation Steps

The renderer estimates how much ambient light reaches each surface point by checking many directions around it. The fraction of directions that are blocked forms an occlusion factor, which reduces ambient light in cramped areas and keeps open areas bright.
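
The principle above can be sketched in a few lines of Python. This is a minimal illustration, not a production renderer: it assumes the only occluders are spheres, samples the hemisphere uniformly (many renderers use cosine-weighted sampling instead), and the names `sample_hemisphere`, `ray_hits_sphere`, and `ambient_occlusion` are hypothetical helpers chosen for this example.

```python
import math
import random

def sample_hemisphere(normal, rng):
    """Return a random unit vector in the hemisphere around `normal`."""
    while True:
        # Rejection-sample a point inside the unit ball, then normalize it.
        v = (rng.uniform(-1, 1), rng.uniform(-1, 1), rng.uniform(-1, 1))
        length_sq = sum(c * c for c in v)
        if 1e-9 < length_sq <= 1.0:
            break
    length = math.sqrt(length_sq)
    v = tuple(c / length for c in v)
    # Flip directions that point below the surface.
    if sum(a * b for a, b in zip(v, normal)) < 0:
        v = tuple(-c for c in v)
    return v

def ray_hits_sphere(origin, direction, center, radius, max_dist):
    """True if a ray (unit `direction`) hits the sphere within `max_dist`."""
    oc = tuple(o - c for o, c in zip(origin, center))
    b = sum(a * d for a, d in zip(oc, direction))
    c = sum(a * a for a in oc) - radius * radius
    disc = b * b - c
    if disc < 0:
        return False  # the ray misses the sphere entirely
    root = math.sqrt(disc)
    t_near, t_far = -b - root, -b + root
    return t_far > 0 and t_near <= max_dist

def ambient_occlusion(point, normal, spheres, samples=256, max_dist=2.0, seed=0):
    """Fraction of sampled directions blocked by nearby spheres (0 = open)."""
    rng = random.Random(seed)
    blocked = 0
    for _ in range(samples):
        d = sample_hemisphere(normal, rng)
        if any(ray_hits_sphere(point, d, c, r, max_dist) for c, r in spheres):
            blocked += 1
    return blocked / samples

# A floor point with nothing above it versus one crowded by a sphere.
floor_point, up = (0.0, 0.0, 0.0), (0.0, 0.0, 1.0)
print(ambient_occlusion(floor_point, up, []))
print(ambient_occlusion(floor_point, up, [((0.0, 0.0, 1.0), 0.9)]))
```

The first call returns 0.0 because no direction is blocked. The second returns a clearly higher occlusion factor, which a shader would use to dim the ambient light at that point. The `max_dist` parameter plays the role of the AO radius: occluders farther away than that are ignored.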

Screen-Space Ambient Occlusion (SSAO)

In real-time graphics, Screen-Space Ambient Occlusion (SSAO) uses the depth and normal data of the rendered image to approximate occlusion quickly. This adds visual detail while keeping the performance cost low.
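
To make the screen-space idea concrete, here is a deliberately simplified sketch in Python. It assumes a depth buffer stored as a list of rows where larger values are farther from the camera, and it uses depth only. Real SSAO shaders also use normals and a randomized sample kernel, so treat the function `ssao` and its parameters `radius`, `bias`, and `max_range` as illustrative assumptions rather than any engine's actual implementation.

```python
def ssao(depth, radius=1, bias=0.05, max_range=1.0):
    """Return a per-pixel visibility map (1.0 = open, 0.0 = fully occluded)."""
    h, w = len(depth), len(depth[0])
    ao = [[1.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            occluded = 0
            total = 0
            for dy in range(-radius, radius + 1):
                for dx in range(-radius, radius + 1):
                    if dx == 0 and dy == 0:
                        continue
                    ny, nx = y + dy, x + dx
                    if not (0 <= ny < h and 0 <= nx < w):
                        continue  # off-screen: screen-space AO has no data here
                    total += 1
                    # A neighbor closer to the camera may block ambient light.
                    diff = depth[y][x] - depth[ny][nx]
                    if bias < diff < max_range:
                        occluded += 1
            if total:
                ao[y][x] = 1.0 - occluded / total
    return ao

# A 3x3 depth buffer with a small pit in the middle.
depth = [
    [1.0, 1.0, 1.0],
    [1.0, 1.5, 1.0],
    [1.0, 1.0, 1.0],
]
for row in ssao(depth):
    print(row)
```

The center pixel sits behind all of its neighbors, so it ends up fully darkened, while the surrounding pixels stay fully lit. The `max_range` check keeps distant foreground objects from darkening the background, and the skipped off-screen samples show why SSAO can miss occluders that are not visible in the frame.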

Part 3. What is the Difference Between Ambient Occlusion and Global Illumination?

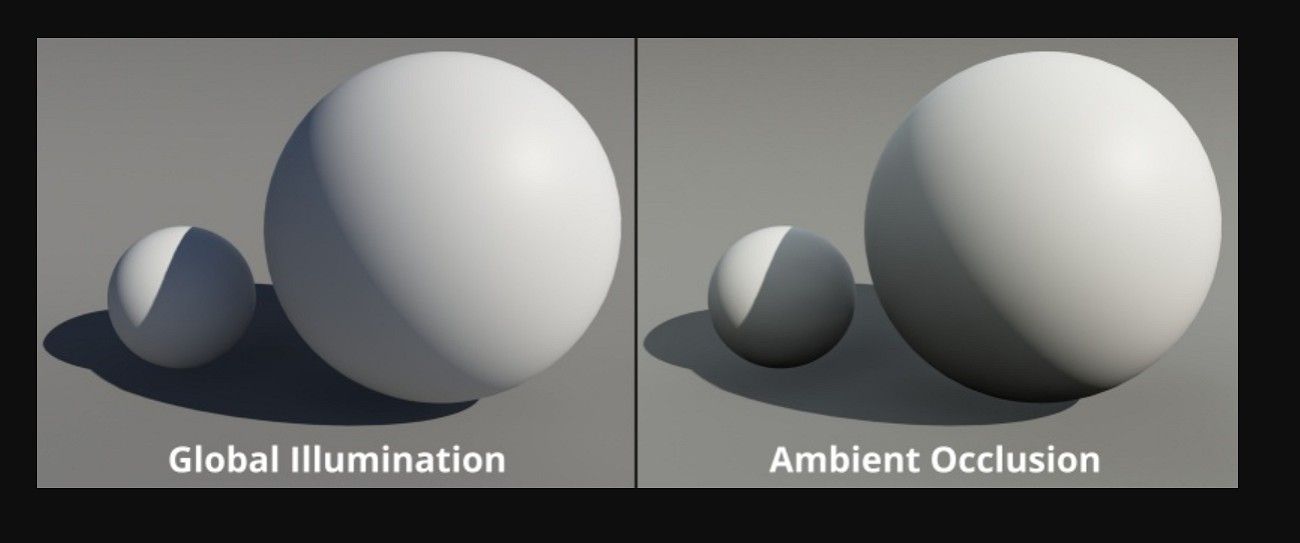

In computer graphics, both ambient occlusion and global illumination make a 3D scene look more realistic by modeling how light affects surfaces. Global illumination simulates indirect light bounces across a scene, while ambient occlusion only estimates how much ambient light is blocked in corners and tight surface areas.

Ambient occlusion produces soft shadows along corners, creases, and tight spaces, which gives objects depth and a more three-dimensional appearance. It is faster and simpler, while global illumination gives full, realistic lighting but requires more computation.

What Does Ambient Occlusion Do?

Ambient occlusion darkens corners, creases, and tight spaces where ambient light cannot reach. It does not simulate light bounces; it only changes how much ambient light reaches a point, adding soft shadows that give depth and contrast. Unlike global illumination, it does not create color bleeding or real indirect light.
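
One common way to express this in a shading function is to scale only the ambient term by the AO value and leave direct light untouched. The sketch below assumes a very simple lighting model (diffuse only, RGB tuples in the 0 to 1 range); the function `shade` and its parameters are hypothetical names for this example.

```python
def shade(albedo, direct, ambient, ao):
    """Combine direct and ambient light; `ao` is visibility (1.0 = fully open)."""
    # Only the ambient contribution is attenuated by ambient occlusion.
    return tuple(a * (d + ao * amb) for a, d, amb in zip(albedo, direct, ambient))

white = (1.0, 1.0, 1.0)
direct = (0.5, 0.5, 0.5)
ambient = (0.25, 0.25, 0.25)

print(shade(white, direct, ambient, ao=1.0))  # open surface
print(shade(white, direct, ambient, ao=0.0))  # deep crease
```

With `ao=1.0` the point receives all of its ambient light, and with `ao=0.0` it keeps only the direct light. No color from neighboring surfaces enters the result, which is exactly the part that global illumination would add.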

What Does Global Illumination Do?

Global illumination models full light transport across a scene, including indirect bounces, reflections, refractions, and diffusion, depending on the renderer. It produces indirect light, softened shadows, and color bleeding, which make scenes look realistic. It attempts to reproduce real-world lighting across the entire scene, usually through techniques such as path tracing, radiosity, light probes, or baked global illumination.

Key Differences at a Glance

| Aspect | Ambient Occlusion (AO) | Global Illumination (GI) |
| --- | --- | --- |
| Main Purpose | Enhance depth via soft contact shadows in occluded areas. | Simulate full indirect lighting and light transport in the scene. |
| What It Models | How much ambient light is blocked locally. | How light bounces, spreads, and changes color globally. |
| Light Bounces | No real bounces; just darkening. | Multiple bounces and interactions between surfaces. |
| Visual Effects | Local soft shadows increase under objects and in corners. | Indirect light, color bleeding, soft global shadows, and overall light balance. |
| Cost & Usage | Relatively cheap; widely used in games as a post-effect. | More expensive; common in films, archviz, and higher-end or hybrid game renderers. |

How They Work Together

Ambient occlusion is often applied on top of global illumination to add contact shadows in corners and creases that global illumination may miss. It complements global illumination by enhancing small details rather than replacing it.

Part 4. What are the Different Types of Ambient Occlusion?

Ambient occlusion has several types that differ by calculation method and cost. Some types suit offline work, while others suit real-time use. Most fall into two main groups: baked AO for static scenes and real-time AO methods, such as screen space, voxel, or ray-traced ambient occlusion. These options balance visual quality and performance based on project needs.

1. Baked/Precomputed Ambient Occlusion

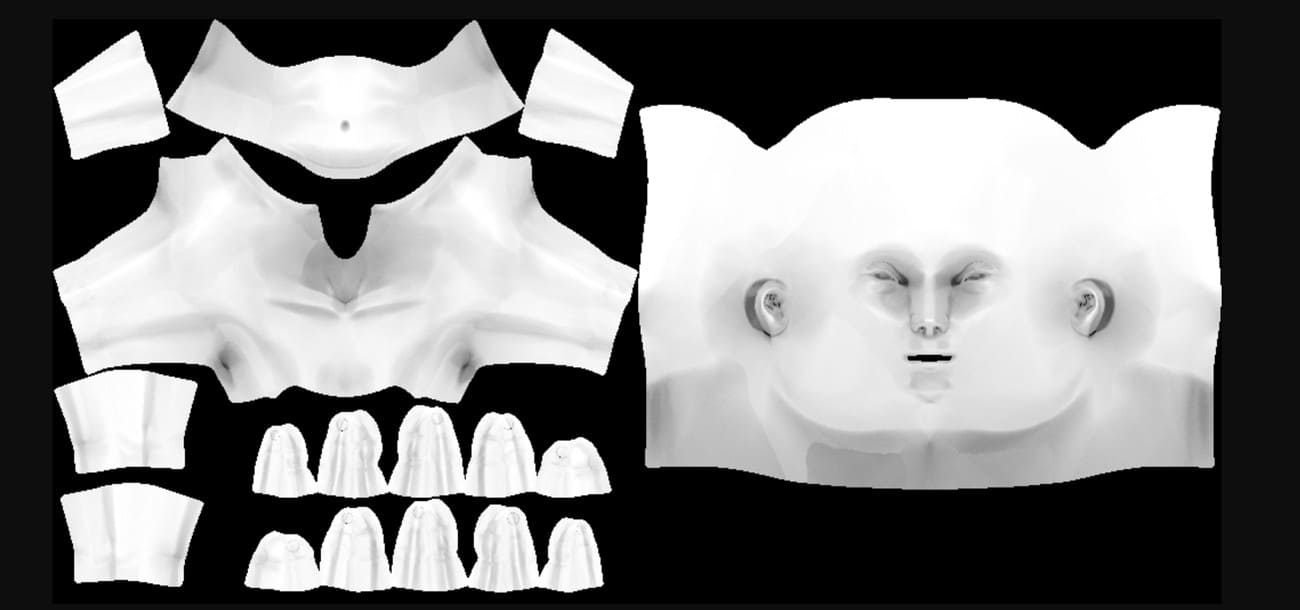

Baked/Precomputed ambient occlusion is calculated offline and saved in textures or vertex colors. During render time, the system applies it to the material to add depth. This method suits static objects and scenes, such as buildings and props. It costs very little at runtime and provides stable, high-quality contact shadows.

2. Screen Space Ambient Occlusion (SSAO)

Screen Space Ambient Occlusion is the original real-time standard. It uses depth and normal data from the rendered frame and checks nearby pixels to darken corners and creases. Games often use SSAO because it runs in real time as a post-process. It may look noisy or less accurate, since it only uses what appears on screen.

3. Horizon-Based/High-Definition Ambient Occlusion (HBAO/HDAO)

HBAO (NVIDIA) and HDAO (AMD) are advanced SSAO methods that use better sampling and greater detail for smoother, more accurate occlusion. They produce softer and cleaner shadows than basic SSAO but use more GPU power, so games often offer them as optional graphics settings.

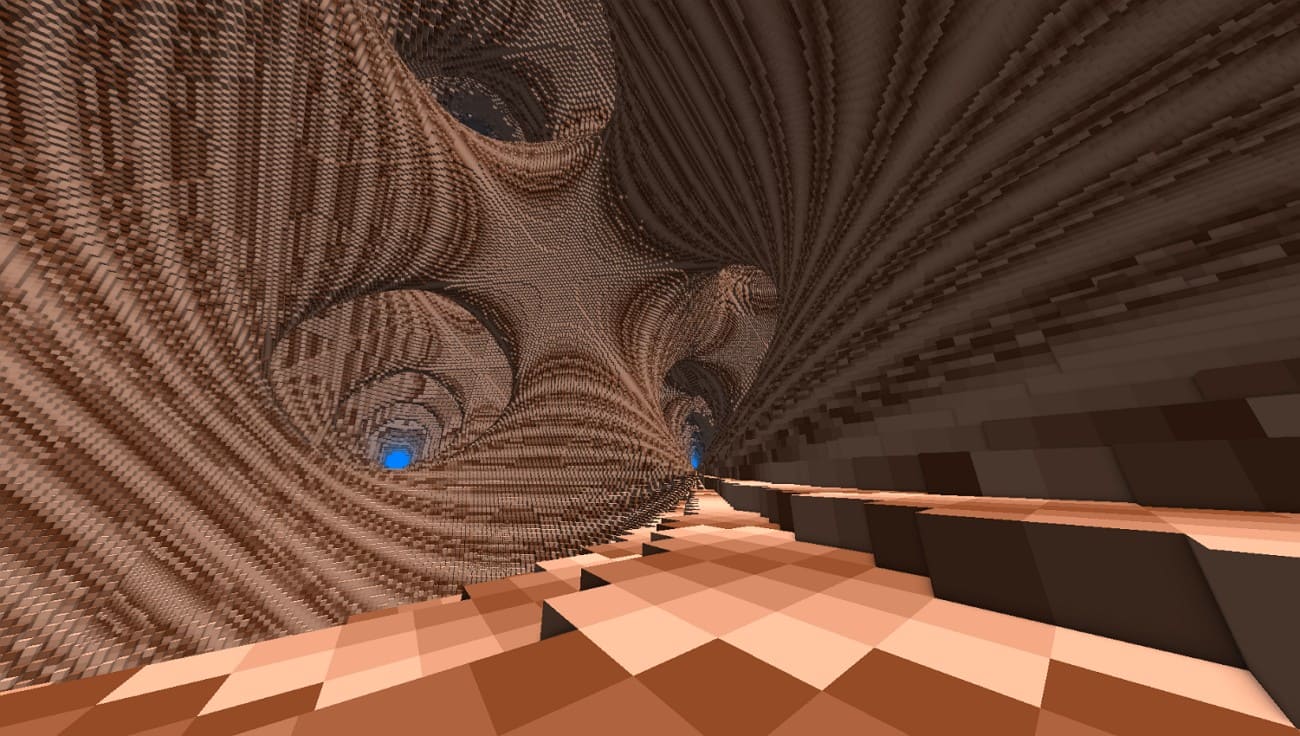

4. Voxel Ambient Occlusion (VXAO)

VXAO turns scene geometry into a 3D voxel grid to estimate how ambient light is blocked, even by objects outside the screen. It looks more realistic than SSAO and feels closer to full global effects, but it uses much more power, so only a few high-end games use it.

5. Ground‑Truth/Ray‑Traced Ambient Occlusion (GTAO / RTAO)

Ground-Truth Ambient Occlusion (GTAO) is a screen-space method designed to match a ray-traced reference more closely, while Ray-Traced Ambient Occlusion (RTAO) traces rays against the actual scene geometry, typically with hardware ray tracing. Both reduce visual artifacts compared with basic SSAO, but they require more computation and usually appear in modern engines; RTAO needs ray tracing support.

Part 5. Should You Turn Ambient Occlusion On or Off?

Turn Ambient Occlusion on if you want better depth and more realistic visuals, and your system can handle a small performance drop. On the other hand, turn it off or lower it if you need higher FPS or if the effect looks too dark or noisy.

When to Turn Ambient Occlusion On?

Many players prefer this effect in single-player or cinematic games. Ambient occlusion adds soft shadows in corners and where objects touch, which makes scenes look more realistic and less flat. On mid-to-high-end GPUs, the performance drop is often acceptable, around 10-20 FPS in some games. As a result, users usually keep AO on and lower the less noticeable settings instead.

When to Turn Ambient Occlusion Off or Set It to Low?

Competitive players often turn it off to keep frame rates high and controls responsive. Ambient occlusion can cost a lot of performance, especially at high resolutions like 4K, sometimes reducing frame rates by 10–20 FPS or more. Low-quality or very strong AO can look blotchy or unnatural and may reduce visibility in dark areas. In such cases, turning it off or using a lower, cleaner option makes sense.

Practical recommendation - The "Middle Ground"

- In single-player or visually detailed games, keep ambient occlusion on Medium or High if your FPS remains smooth.
- For competitive or performance-focused games, set ambient occlusion to Low or Off, and only enable it if you have plenty of FPS to spare.

Part 6. How is Ambient Occlusion Used in Practice?

Ambient occlusion is used in many fields to add subtle occlusion shadows, depth, and realism without the high cost of full global illumination. How ambient occlusion is applied depends on the content type: real-time use in games or VR/AR, and offline use in film or architectural visualization.

1. Ambient Occlusion in Games

Games usually use screen space ambient occlusion to add shadows in corners, creases, and contact points in real time. This makes worlds look less flat and more realistic. AO appears as a graphics option so players can choose better depth at some cost to performance. It is most noticeable around rocks, foliage, character armor, and objects inside buildings.

2. Ambient Occlusion in 3D Rendering and Animation

In offline rendering for film and animation, ambient occlusion is baked into textures or rendered as a separate pass to add material detail and occlusion shadows. Artists composite the AO pass over the main render to bring out folds, gaps, and small surface details like wrinkles, screws, or panel lines. This makes the final image more realistic and detailed.

3. Ambient Occlusion in Architectural Visualization

Architectural visualization artists apply ambient occlusion to highlight connections between walls, floors, furniture, window frames, and exterior details. This helps interiors and exteriors appear solid and realistic rather than floating.

AO passes, or maps, are often combined with the main image in Photoshop or similar software to add subtle shadows in corners and edges. This improves contrast and gives still images a natural, photographic feel without complex light calculations.
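
A common way to combine an AO pass with the main image is a multiply blend with adjustable opacity. The sketch below assumes that approach and works on plain lists of pixels rather than real image files; `composite_ao` and `strength` are hypothetical names for this example, not a Photoshop or renderer API.

```python
def composite_ao(beauty, ao, strength=1.0):
    """Multiply-blend a grayscale AO pass over an RGB image.

    beauty:   list of (r, g, b) tuples in the 0..1 range
    ao:       list of AO values in the 0..1 range (1.0 = no darkening)
    strength: 0.0 leaves the image unchanged, 1.0 applies the full AO pass
    """
    out = []
    for (r, g, b), a in zip(beauty, ao):
        # Fade the AO value toward white as strength drops to zero.
        factor = 1.0 - strength * (1.0 - a)
        out.append((r * factor, g * factor, b * factor))
    return out

pixels = [(1.0, 0.5, 0.25)]
ao_pass = [0.5]

print(composite_ao(pixels, ao_pass, strength=1.0))  # [(0.5, 0.25, 0.125)]
print(composite_ao(pixels, ao_pass, strength=0.5))  # [(0.75, 0.375, 0.1875)]
```

Lowering `strength` is the equivalent of reducing layer opacity, which is an easy way to keep the effect subtle.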

4. Ambient Occlusion in VR and AR

In VR and AR, ambient occlusion improves the perception of depth and space in virtual scenes while keeping performance costs low. It adds realism without full light calculations. Developers use lightweight AO methods, like optimized SSAO or baked AO, so objects in VR training, product demos, and AR displays appear fixed to surfaces. This makes virtual scenes feel stable, natural, and believable.

Part 7. How to Optimize Ambient Occlusion Without Losing Quality?

Ambient occlusion can be improved by choosing smart methods, adjusting settings, and using a render farm to keep depth and realism without slowing performance.

1. Pick the Right Ambient Occlusion Method

For real-time work, use efficient methods like SSAO or HBAO+ instead of heavier ones such as VXAO or ray-traced AO, unless you have high-end hardware. SSAO and HBAO+ give a good balance of quality and speed, while ray-traced AO is best reserved for premium presets.

2. Use a Render Farm

Heavy ambient occlusion with high samples or detailed passes can be slow on a single machine. In this case, a render farm could help. Fox Renderfarm processes AO maps or frames on many computers at once, keeping high resolution and clean shadows while reducing total render time. This is useful for large scenes like cities, interiors, or product showcases.

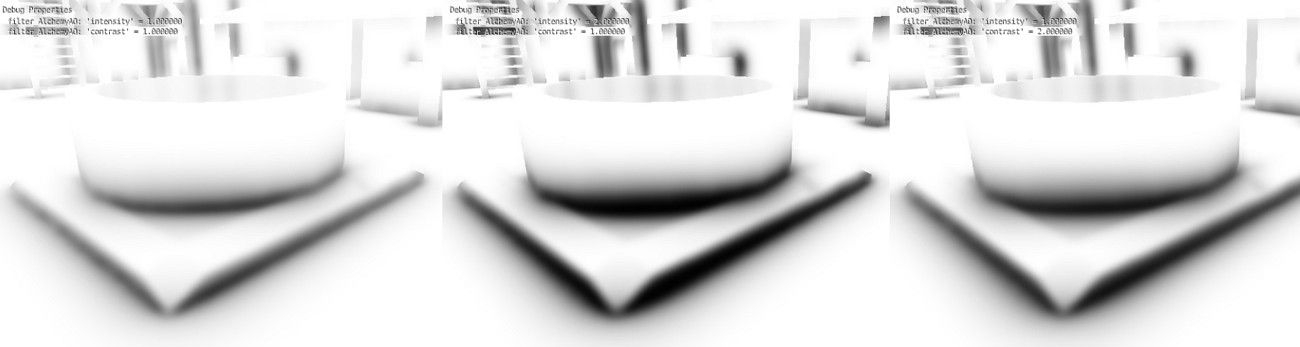

3. Adjust Samples, Radius, and Intensity

Lower the ambient occlusion sample count slightly and add a subtle blur to reduce noise. Keep the radius moderate and the intensity low to medium. Overly strong AO looks dirty and wastes GPU power.

4. Use Lower-Resolution AO with Filtering

Compute ambient occlusion at lower resolution, then upscale and blur it. Limit AO to certain areas, such as interiors or dark regions, to save performance.
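
The upscale-and-blur step can be sketched as follows. This example assumes the low-resolution AO map has already been computed and is stored as a list of rows; it uses nearest-neighbor upscaling and a plain box blur for brevity, whereas real-time pipelines often prefer a depth-aware (bilateral) blur so shadows do not bleed across object edges. The helper names `upscale_nearest` and `box_blur` are hypothetical.

```python
def upscale_nearest(ao, factor):
    """Enlarge a 2D AO map by an integer factor using nearest-neighbor lookup."""
    h, w = len(ao), len(ao[0])
    return [[ao[y // factor][x // factor] for x in range(w * factor)]
            for y in range(h * factor)]

def box_blur(img, radius=1):
    """Average each pixel with its neighbors, clamping the window at borders."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            total, count = 0.0, 0
            for ny in range(max(0, y - radius), min(h, y + radius + 1)):
                for nx in range(max(0, x - radius), min(w, x + radius + 1)):
                    total += img[ny][nx]
                    count += 1
            out[y][x] = total / count
    return out

# AO computed at half resolution (2x2), shown at full resolution (4x4).
low_res_ao = [
    [1.0, 0.0],
    [1.0, 1.0],
]
full_res = box_blur(upscale_nearest(low_res_ao, 2), radius=1)
for row in full_res:
    print([round(v, 2) for v in row])
```

Computing AO at half resolution in each dimension means roughly a quarter of the pixels to evaluate, and the blur hides the blocky edges that upscaling would otherwise leave behind.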

5. Bake AO for Static Content

For static objects, bake AO into textures or vertex colors. This provides high-quality shadows at runtime without extra cost.

Part 8. FAQs about Ambient Occlusion

1. Is Ambient Occlusion necessary?

It is not strictly necessary, but ambient occlusion adds depth and realism to scenes. It helps objects feel grounded and less flat.

2. Does Ambient Occlusion increase FPS?

No, ambient occlusion usually reduces FPS slightly because it adds extra calculations. Lowering AO settings or turning it off can improve performance.

3. Is Ambient Occlusion CPU or GPU?

Ambient occlusion mainly uses the GPU for real-time effects in games. Some offline AO calculations can use the CPU for baking.

Conclusion

Summing up, Ambient Occlusion adds depth and realism to 3D scenes by creating soft shadows in corners, creases, and contact points. Proper AO choice and optimization keep visuals sharp without heavy performance loss. For high-quality renders, especially with heavy AO passes or animations, using an online solution like Fox Renderfarm ensures fast, clean results while maintaining full detail.