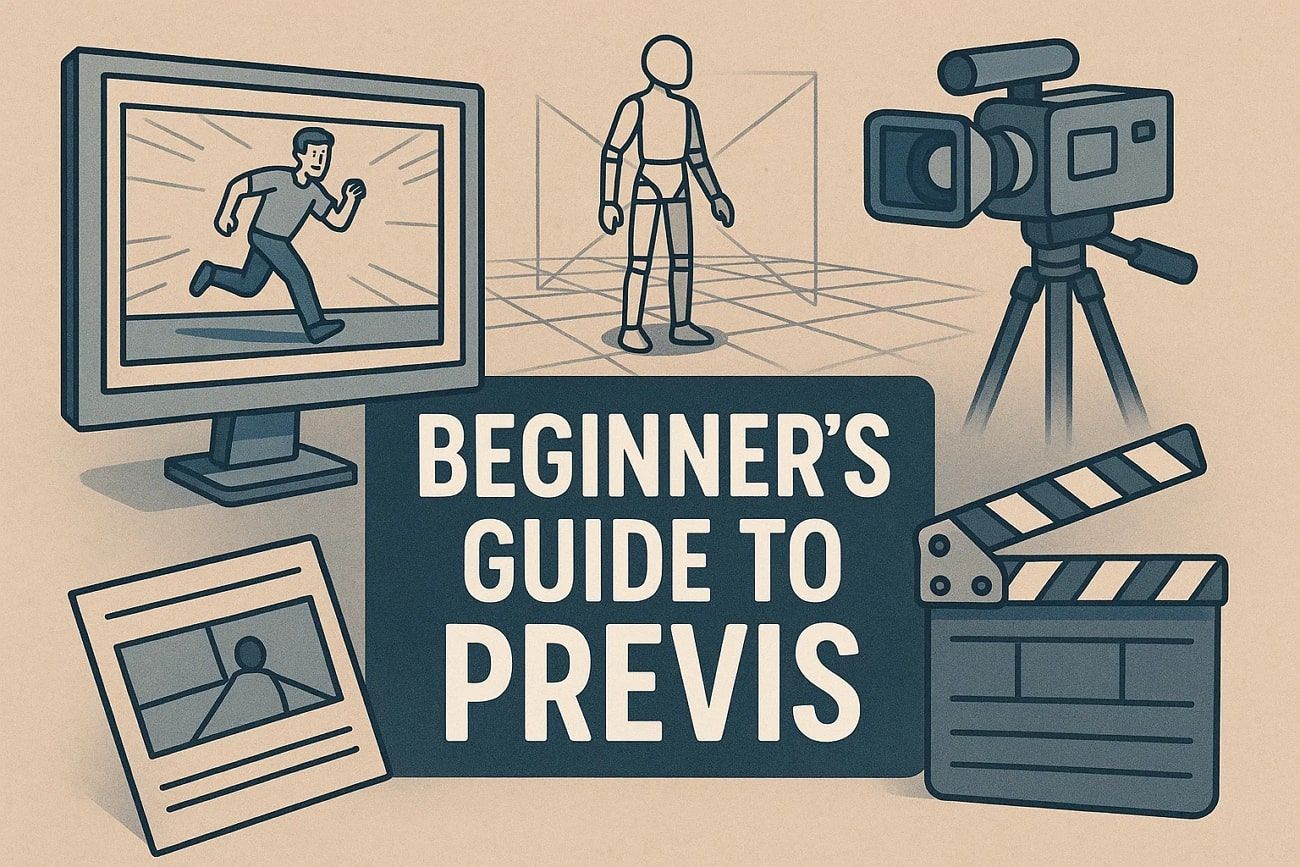

A Beginner’s Guide to Previsualization for Film and Animation

Many beginners in film and animation struggle to bring their ideas to life clearly. They often waste time adjusting the scene, camera angles, or timing during production. Previsualization solves this problem by letting creators plan scenes before filming or final animation. Since previs may be new to some beginners, this article offers a thorough introduction to previsualization for film and animation.

Part 1. What Is Pre-Visualization?

Pre-visualization, also called previs, is a process used in film and animation to plan scenes before production. It helps creators see how shots, camera angles, and sequences will look. By creating a rough version of the scenes, filmmakers can make decisions early, avoid mistakes, and save time and money.

Previs can be done with simple sketches, storyboards, or basic digital tools. It also helps the entire team understand the vision and work together more effectively.

Part 2. 5 Common Types of Previsualization

Previsualization comes in several forms. Five of the most common are explained below:

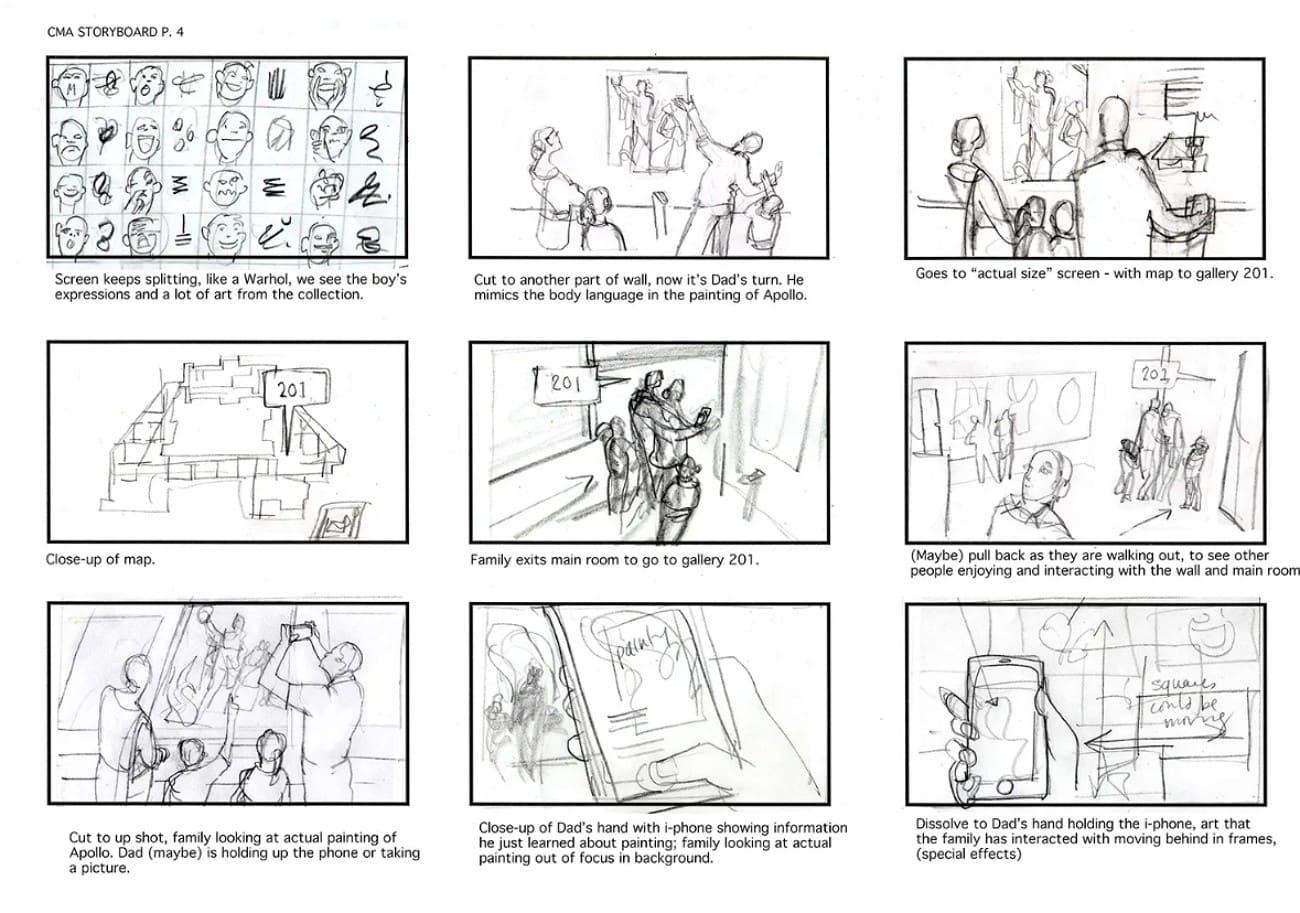

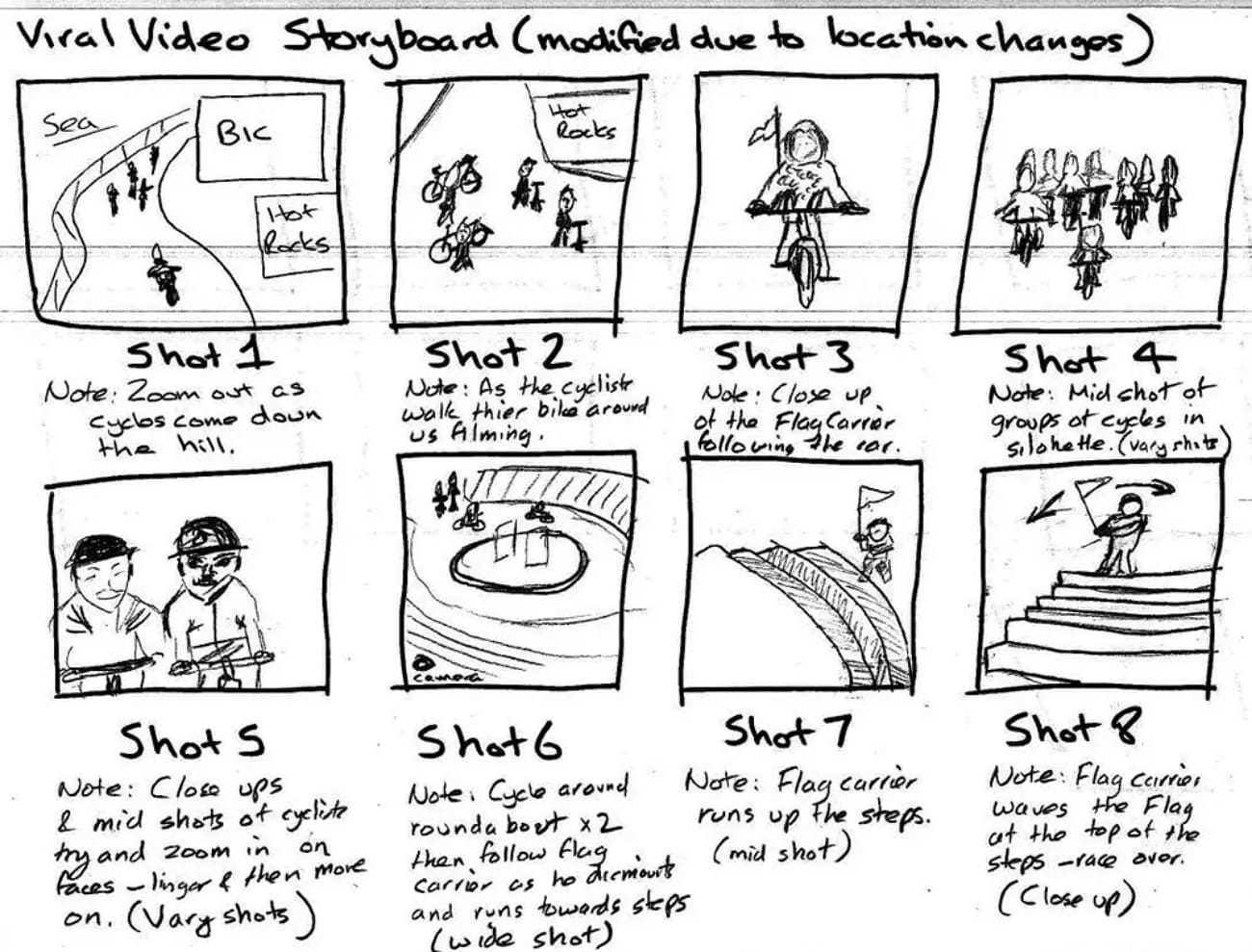

1. Storyboards

Storyboards are a series of drawings or frames that show important moments and camera angles. They usually contain simple drawings with comments or captions. Concept art and mood boards are related tools that convey the appearance, style, and feel of a scene. Together, these communicate lighting, color, and setting before technical planning and help the team agree on the visuals and mood of the film or animation.

2. Animatic

An animatic is a short video made from storyboard frames, rough artwork, temporary sound, and dialogue. This kind of previsualization shows how a scene flows, the rhythm of the shots, and the timing before expensive 3D or live-action work begins. Directors and editors can therefore see the pacing and order of shots and make changes early.

3. Shot List

A shot list is a detailed list of every camera shot, angle, and framing in a scene, prepared before production. Each entry normally records the type of shot, the camera position, the lens choice, and any movement or action.

This kind of previs helps the director, cinematographer, and crew know exactly what to shoot, so that nothing is missed and time is saved on set.
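For readers who prefer to keep a shot list in a script or spreadsheet-like form, the fields described above can be sketched as a small data structure. This is only an illustrative layout: the `Shot` class, its field names, and the sample shots are assumptions made for this example, not an industry standard.

```python
from dataclasses import dataclass


@dataclass
class Shot:
    """One row of a shot list (field names are illustrative assumptions)."""
    number: int
    shot_type: str        # e.g. "wide", "medium", "close-up"
    camera_position: str  # where the camera sits
    lens_mm: int          # lens choice as a focal length in millimetres
    movement: str         # e.g. "static", "dolly in", "pan left"
    action: str           # the key action in the shot


def format_shot_list(shots):
    """Return the shot list as plain-text lines, one line per shot."""
    lines = []
    for s in shots:
        lines.append(
            f"{s.number:>2} | {s.shot_type:<9} | {s.lens_mm}mm | "
            f"{s.camera_position} | {s.movement} | {s.action}"
        )
    return lines


# Hypothetical sample shots for a short scene.
shots = [
    Shot(1, "wide", "doorway, eye level", 24, "static", "Hero enters the room"),
    Shot(2, "close-up", "over desk, low angle", 85, "dolly in", "Hero picks up the letter"),
]

for line in format_shot_list(shots):
    print(line)
```

Keeping every shot in the same structure makes it easy to spot a missing lens choice or camera position before the shoot.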

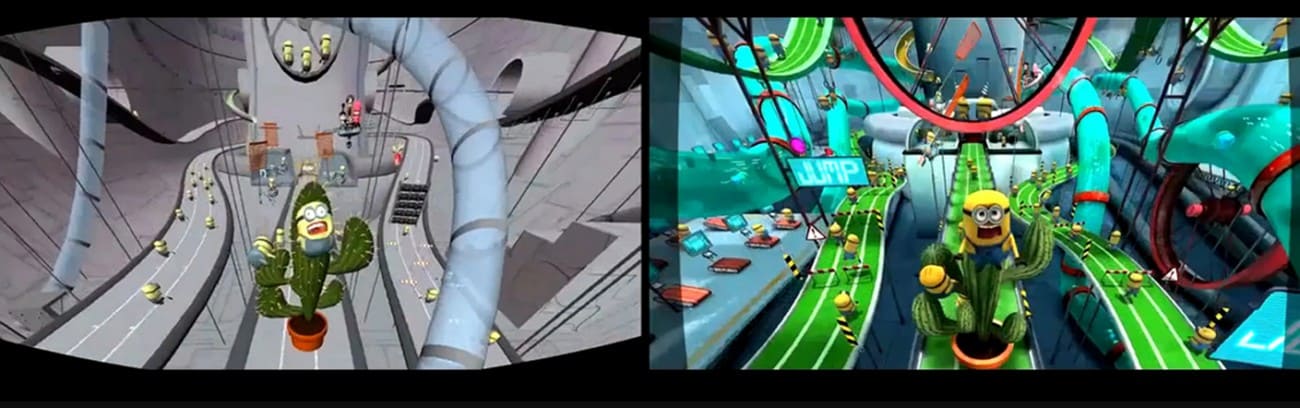

4. 3D Previs

3D previs uses simple 3D models, cameras, and virtual sets to plan movement, camera paths, and complex action. It allows the team to test lens choices, scene layout, and interactions with effects. The results can then feed into more precise storyboards and shot lists for production.

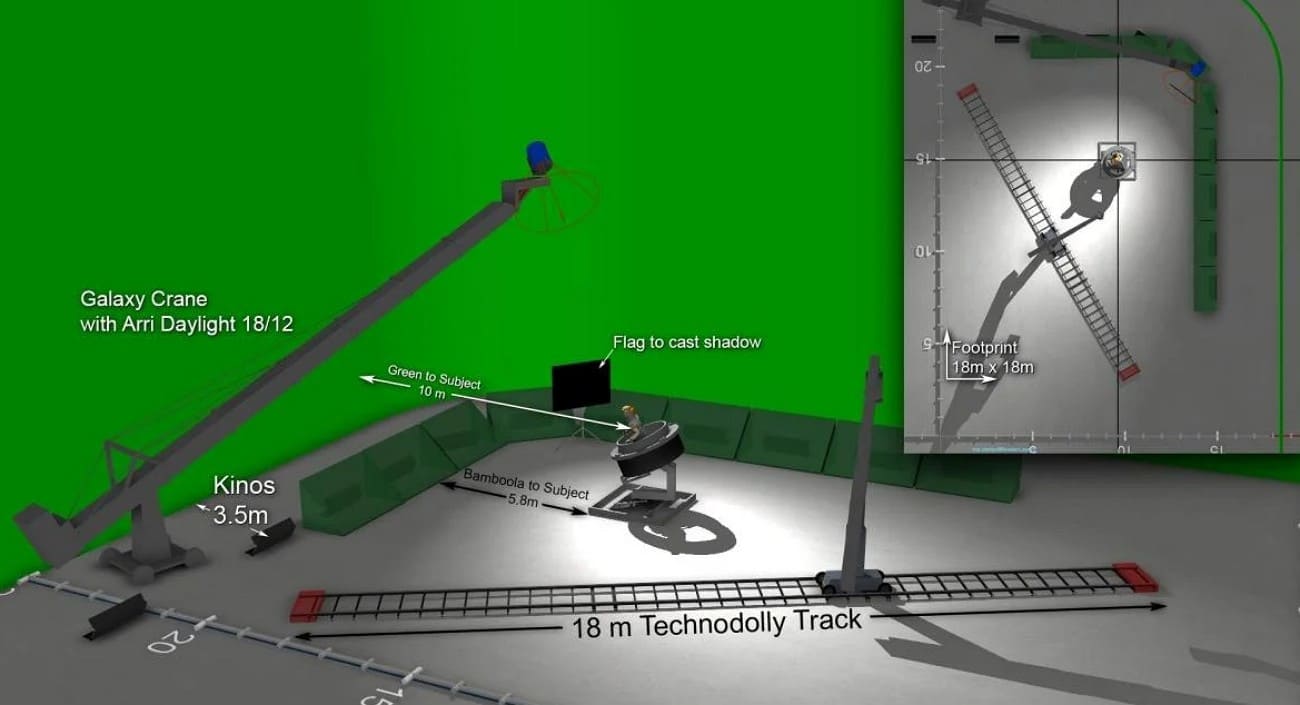

5. Techvis

Unlike the other types, techvis converts previs into technical data such as camera positions, motion paths, crane heights, and green screen layouts. It helps ensure that planned shots can be executed safely on set and guides equipment setup. In this way, techvis helps the crew avoid costly errors by showing what is possible with the tools and space available.
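As a rough illustration of the kind of check techvis involves, the sketch below estimates how far back a camera must be to frame a subject of a given width, and whether that distance fits the available space. It uses the simple pinhole-camera approximation (framed width = distance × sensor width / focal length). The function names, the 36 mm sensor width, and the room dimensions are assumptions chosen for this example; real lenses and rigs need extra margin.

```python
import math


def horizontal_fov_degrees(sensor_width_mm, focal_length_mm):
    """Horizontal angle of view under the pinhole approximation."""
    return math.degrees(2 * math.atan(sensor_width_mm / (2 * focal_length_mm)))


def distance_to_frame_width(subject_width_m, sensor_width_mm, focal_length_mm):
    """Camera-to-subject distance (metres) at which the subject fills the frame width."""
    return subject_width_m * focal_length_mm / sensor_width_mm


def shot_fits(subject_width_m, sensor_width_mm, focal_length_mm, available_depth_m):
    """True if the required camera distance fits in the available space."""
    needed = distance_to_frame_width(subject_width_m, sensor_width_mm, focal_length_mm)
    return needed <= available_depth_m


# Hypothetical numbers: a 4 m wide set piece, a 36 mm wide sensor, 5 m of room depth.
SENSOR_WIDTH_MM = 36
for lens_mm in (18, 72):
    needed = distance_to_frame_width(4, SENSOR_WIDTH_MM, lens_mm)
    fits = shot_fits(4, SENSOR_WIDTH_MM, lens_mm, available_depth_m=5)
    print(f"{lens_mm}mm lens: needs {needed:.1f} m, fits in 5 m room: {fits}")
```

With these assumed numbers, the wide lens fits in the room while the long lens does not, which is the sort of problem techvis is meant to catch before the crew arrives on set.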

Part 3. Key Tools & Software for Previsualization

To create effective scenes, it helps to know which previs tools and software suit your project. This section covers five popular tools and what makes each one useful for previsualization:

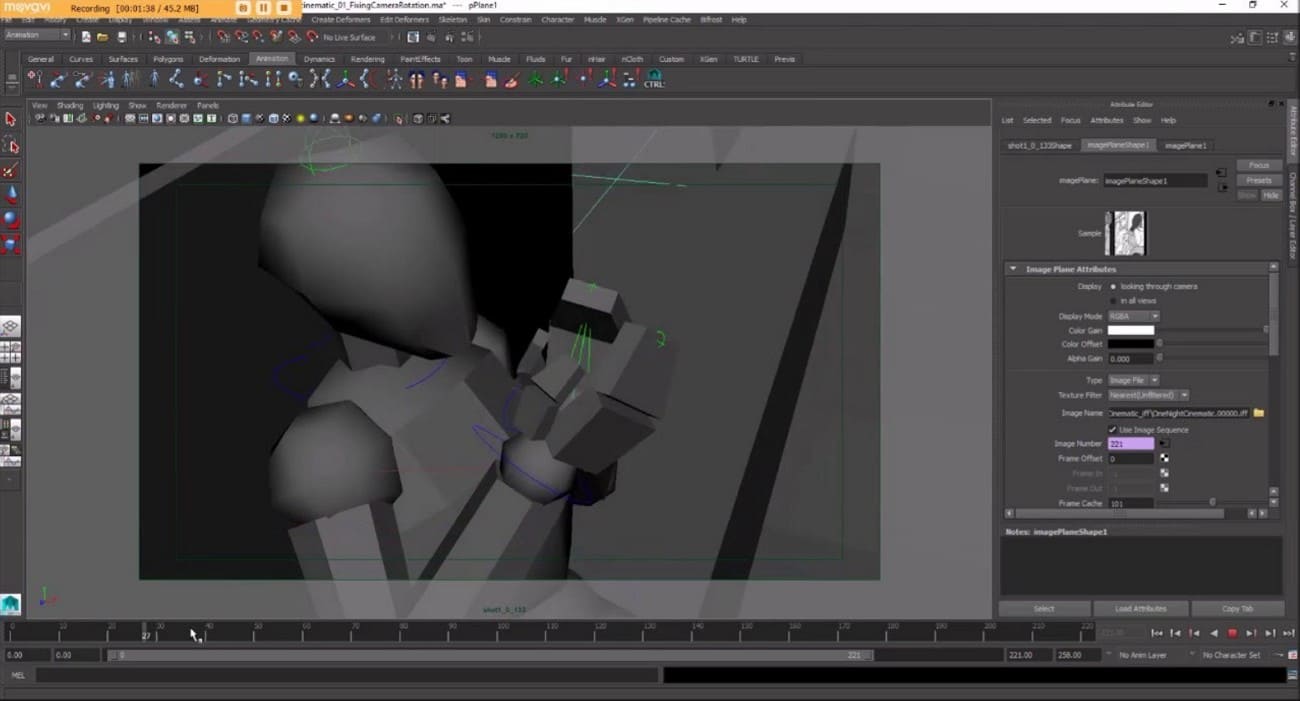

1. Autodesk Maya

Autodesk Maya includes Camera Sequencer tools that let previs artists block out multiple shots. With them, users can switch angles and cut simple edits directly in 3D without leaving the software. Its animation toolkit, including motion-path and mocap workflows, makes it easy to block character and camera motion. OpenUSD support and a strong plugin ecosystem mean previs scenes and cameras can move smoothly between applications.

2. Storyboarder

Storyboarder is built specifically for fast story exploration, allowing you to sketch boards with figures as simple as stick figures. Its integrated Shot Generator lets you create simple 3D scenes with characters, props, and cameras, and it can generate boards from predefined shot types, which is ideal for rapid previs. You can also set panel durations, add dialogue captions, and play back scenes as basic animatics.

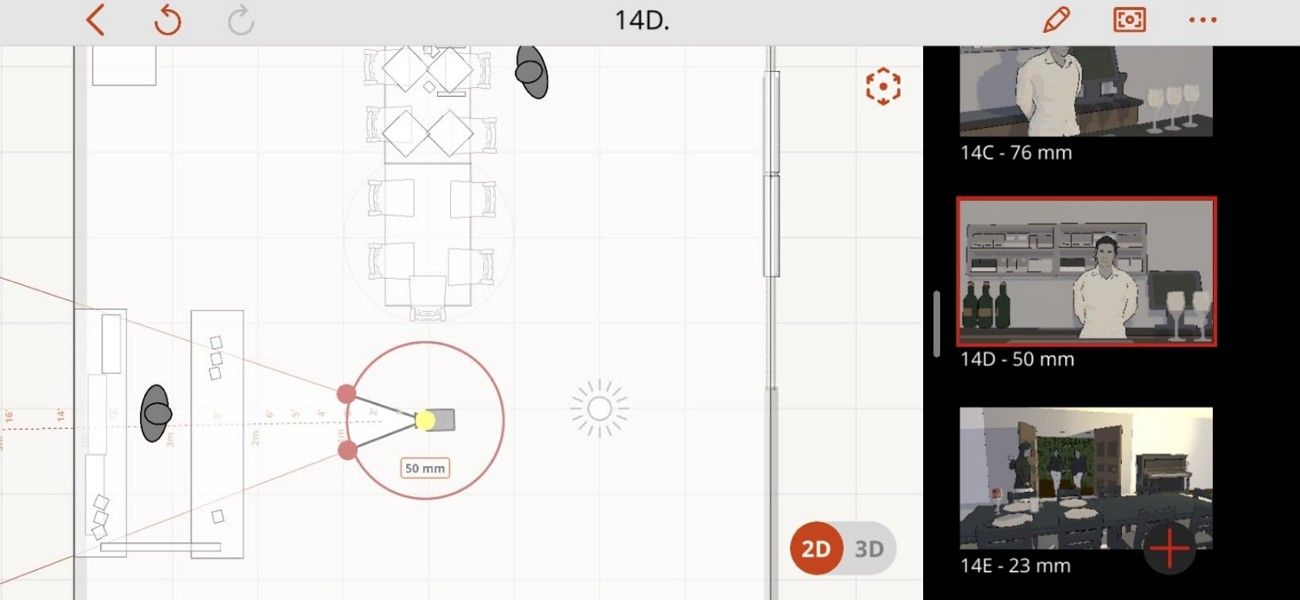

3. Previs Pro

Previs Pro fits the definition of previs closely because it lets you build complete 3D storyboards with characters and props. Its animatics tool adds motion, timing, and pacing, so you can turn a static board into a production-ready previs video. AR virtual cameras and LiDAR imports let you drop virtual characters and sets into real locations. You can also import 3D models, generate props with AI, and export scenes to Unreal.

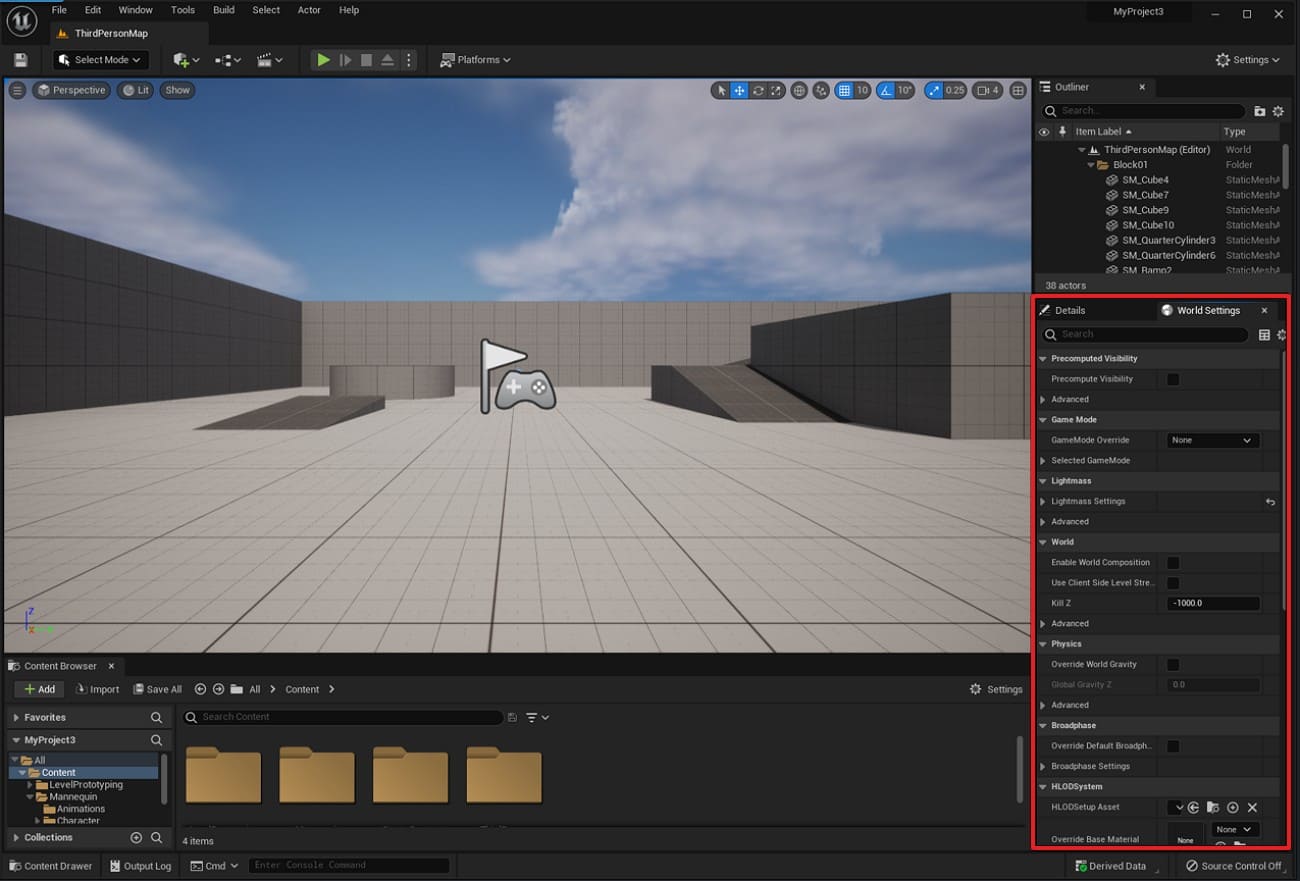

4. Unreal Engine

Unreal Engine is another previsualization program. Its Cine Camera Actors and virtual cameras (VCams) simulate real lenses and sensor sizes. Its character tool also makes it easy to build believable 3D sets where you can experiment with staging. Virtual production workflows and virtual scouting mean previs scenes can flow forward into in-camera VFX. Its Sequencer timeline acts as a built-in non-linear editor, letting you cut and arrange shots inside the engine.

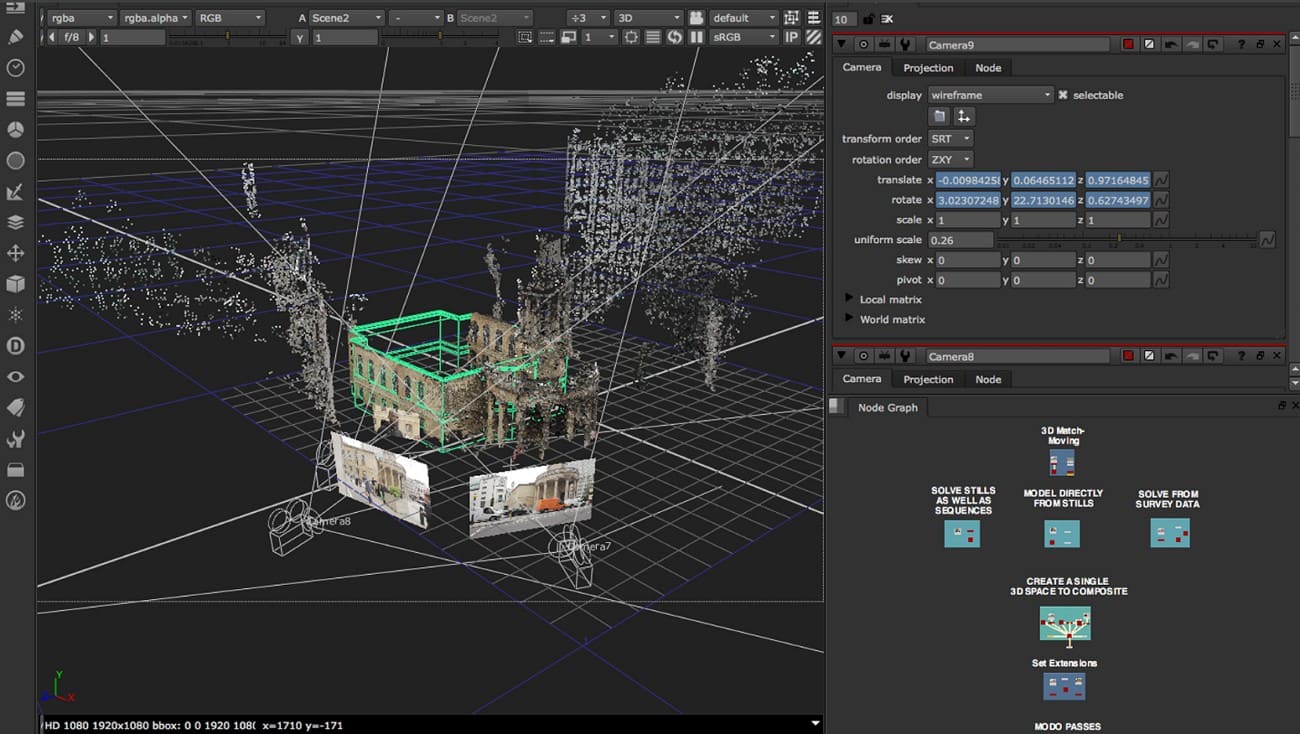

5. Nuke

Nuke offers over 200 compositing nodes, which make it easy to combine CG previs renders and temp matte paintings into editable shot mockups. The multi-shot timelines in Nuke Studio and Hiero allow teams to manage entire sequences and temp VFX across many previs shots at once. Customization through the Python API enables studios to build previs-specific templates that keep sequences visually consistent. In addition, the integrated 3D space allows artists to explore staging and lens choices before full 3D composition.

Part 4. How to Make a Previsualization (Beginner's Guide)

Now that you know the tools, let’s walk through how to create a previsualization in five simple steps, which are also demonstrated in this video:

1. Clarify the Idea

The first step is to review your script or outline and determine which parts require previsualization. Then create a simple shot list that describes the camera angle, the camera movement, and the key action in each shot.

2. Choose Previs Format

Next, decide which type of previs is best for your project. If your project is simple, you can use hand-drawn storyboards or photos arranged to show the scene. However, if you want to plan timing and camera motion more clearly, create an animatic. An animatic is like a video version of your storyboard, in which you arrange each shot with timing and sometimes sound in software such as Premiere or After Effects.

3. Build Rough Visuals

Next, draw simple images of how your scenes will appear; they need not be elaborate or flawless. Characters and sets can be depicted using very simple shapes in 2D or 3D. The objective is to concentrate on the framing of each shot and the movement the camera will follow.

If your scene includes complex camera movements or special effects, you can use 3D applications such as Blender, Maya, or Previs Pro to test and refine them without much effort.

4. Add Timing and Sound

Place all your shots in sequence and assign each one an approximate duration. This helps you judge the pace, flow, and rhythm of the scene. To test how the sequence will feel, you can also add temporary dialogue, sound effects, or music, even though everything remains rough and not final.
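To make the timing step concrete, here is a minimal sketch that turns approximate shot durations into frame ranges and a total running time. The `build_timeline` and `total_seconds` helpers, the 24 fps frame rate, and the sample durations are all assumptions for illustration; editing software such as Premiere handles this for you once the shots are on a timeline.

```python
def build_timeline(durations_s, fps=24):
    """Convert per-shot durations (seconds) into consecutive frame ranges."""
    timeline = []
    start = 0
    for shot_number, duration in enumerate(durations_s, start=1):
        frames = round(duration * fps)
        timeline.append({
            "shot": shot_number,
            "start_frame": start,
            "end_frame": start + frames - 1,  # inclusive last frame of the shot
            "frames": frames,
        })
        start += frames
    return timeline


def total_seconds(timeline, fps=24):
    """Total running time of the sequence in seconds."""
    return sum(entry["frames"] for entry in timeline) / fps


# Hypothetical rough durations for a three-shot sequence.
timeline = build_timeline([3.0, 1.5, 4.0], fps=24)
for entry in timeline:
    print(entry)
print("Total:", total_seconds(timeline, fps=24), "seconds")
```

Changing one duration and re-running the sketch shows how every later shot shifts, which is the same effect you see when you trim a shot in an animatic.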

5. Review and Refine

Finally, watch the previs, either alone or with your team, and identify areas for improvement. You may need to adjust shot sizes, camera angles, or timing until the sequence becomes clear and easy to follow. Once it feels right, use the finished previs as a guide during production. It will help you follow the shot list, plan your shooting schedule, and better understand the budget.

Part 5. Applications of Previsualization

Once you understand the basics of previsualization, you can apply the process in several fields to plan, test, and improve visual projects:

1. Film Production: Directors can use previs to design complicated shots, camera movements, and actor placement. Working out lens choice, framing, and blocking in advance helps make scenes clear and efficient before shooting.

2. Animation: As an animator, you can rely on previs to set character movements, scene layout, and timing. With rough 3D models and keyframes, you can test flow and pace before the final animation.

3. Photography: Photographers can use previs to plan lighting setups, angles, and subject positions. Working out depth of field, camera positioning, and composition in advance helps ensure the intended visual result.

4. Advertising & Commercials: Ad teams can use previs to design camera paths, story flow, and visual storytelling. Storyboards, effect placeholders, and timing charts will help create precise, appealing commercials.

5. Virtual Production & VFX: VFX teams use previs to coordinate stunts, CGI, and virtual set interactions. Planning camera tracking, green screen layouts, lens simulation, and motion capture in advance helps ensure accurate results.

Part 6. FAQs about Previsualization

1. What does a previsualization artist do?

A previsualization artist plans scenes and shots to show directors the visual story clearly. They create rough models, storyboards, or animatics so teams can see and adjust each scene.

2. Can I use motion capture technology with previsualization software?

Yes, motion capture data can be added to previsualization software to show character movement accurately. It helps test action, stunts, or complex sequences before full animation or live production begins.

3. What are the best software tools for previsualization in filmmaking?

Popular previsualization software includes FrameForge, ShotPro, Blender, and Maya for 3D and storyboard creation. These tools allow directors to plan shots, camera angles, and sequence scenes before actual filming.

Conclusion

To wrap up, previsualization helps creators plan scenes and test shots before production to save time and reduce errors. This article has explained the process in detail, covering storyboards, animatics, shot lists, 3D previs, and techvis.

For teams working on more complex previs scenes, a render farm like Fox Renderfarm can be a practical option to handle heavy test renders and previews more efficiently.