Neural Rendering Guide: AI Synthesis and 3D Production

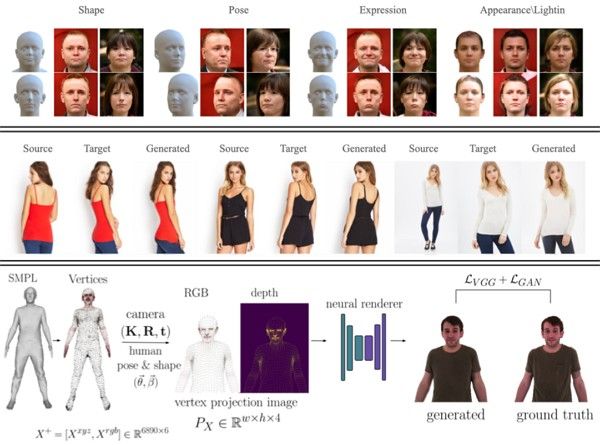

Neural rendering is a new approach in 3D graphics where artificial intelligence is used to generate and enhance visual scenes instead of relying only on traditional math-based rendering methods. This technology allows computers to learn how light, textures, and environments behave, making it possible to create more realistic and efficient graphics.

From RTX-powered neural rendering systems to AI-based shaders that reduce computational load, these techniques are transforming how real-time 3D content is produced. They are especially important in gaming, film production, and virtual environments where performance and visual quality must work together.

In this guide, you will learn what neural rendering is, how it works, and how technologies like NVIDIA’s neural rendering systems and diffusion-based models are shaping the future of 3D production.

Part 1. What is Neural Rendering & How It Works?

Neural rendering is a way to create 3D images in which a trained neural network does part of the work, rather than relying solely on explicit simulation of light and geometry. In a standard workflow such as ray tracing, the computer traces the paths of many light rays as they bounce off surfaces, a process that is slow and demands a lot of computing power. In a neural workflow, the AI instead learns what a scene or object should look like from various angles and then predicts the pixels directly, which can be much faster.
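
To make the "predict the pixels" idea concrete, here is a minimal sketch, not the method used by any particular product. It assumes a tiny two-layer network that maps a screen position and a viewing angle to a colour. The names `make_tiny_network` and `predict_pixel` are hypothetical, and the weights are fixed random numbers; a real system would learn them from many images of the scene.

```python
import math
import random

def make_tiny_network(seed=0, inputs=3, hidden=8, outputs=3):
    """Build fixed random weights; a real system would learn these from images."""
    rng = random.Random(seed)
    w1 = [[rng.uniform(-1, 1) for _ in range(inputs)] for _ in range(hidden)]
    w2 = [[rng.uniform(-1, 1) for _ in range(hidden)] for _ in range(outputs)]
    return w1, w2

def predict_pixel(network, x, y, view_angle):
    """Map a screen position and viewing angle to an (r, g, b) colour in [0, 1]."""
    w1, w2 = network
    features = [x, y, view_angle]
    # Hidden layer: weighted sum followed by a ReLU non-linearity.
    hidden = [max(0.0, sum(w * f for w, f in zip(row, features))) for row in w1]
    # Output layer: a sigmoid squashes each colour channel into the 0..1 range.
    return tuple(
        1.0 / (1.0 + math.exp(-sum(w * h for w, h in zip(row, hidden))))
        for row in w2
    )

# "Render" a 4x4 image by querying the network once per pixel.
network = make_tiny_network()
image = [[predict_pixel(network, x / 3, y / 3, 0.5) for x in range(4)]
         for y in range(4)]
```

The point of the sketch is the shape of the computation: each pixel costs one small network evaluation instead of a simulation of many light bounces.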

Part 2. 5 Current Uses of Neural Rendering to Know

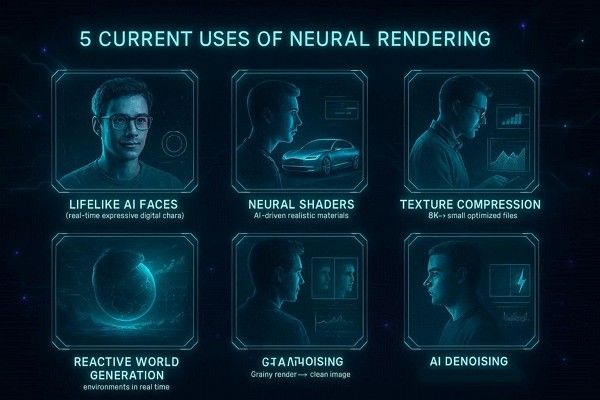

While learning what neural rendering is, it's important to know that this technology is already transforming workflows. Beyond theory, it is now accessible to creators through several practical applications. As of 2026, here are 5 of its most common use cases:

- Lifelike RTX Neural Faces: Developers now use AI to generate realistic expressions and skin textures for real-time interactions. The result is believable characters that react to users immediately without needing massive pre-rendered files.

- High-Speed Neural Shaders: Complex materials like car paint or silk can now be approximated by AI rather than computed with expensive shading calculations. This switch allows artists to achieve cinematic surface quality while keeping their viewport smooth and responsive.

- Smart Texture Compression: AI helps shrink massive 8K texture files to a fraction of their size with little visible loss of sharpness. As a result, designers can fit high-detail models into limited memory, making complex scenes much easier to manage.

- Reactive Diffusion Synthesis: Environments can now grow and change instantly as you move through a virtual 3D space. This process uses live data to paint realistic details and create endless worlds that feel truly alive.

- Real-Time Noise Removal: AI-powered denoising cleans up grainy, raw renders in milliseconds, so creators no longer have to wait hours for a clean image. This gives them a near-final preview almost immediately, drastically speeding up the entire artistic workflow.
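
To see why texture compression matters for memory, the following arithmetic sketch may help. It assumes "8K" means 8192 x 8192 texels stored as uncompressed RGBA at 4 bytes per texel, and it uses a hypothetical 8:1 compression ratio purely for illustration; that ratio is not a measured figure for any neural compressor.

```python
MIB = 1024 * 1024

def texture_bytes(width, height, bytes_per_texel=4):
    """Size of one uncompressed texture level (no mipmaps)."""
    return width * height * bytes_per_texel

def compressed_bytes(raw_bytes, ratio):
    """Size after compression at the given ratio (e.g. 8 means 8:1)."""
    return raw_bytes / ratio

raw = texture_bytes(8192, 8192)               # assumed 8K RGBA8 texture
print(raw / MIB)                              # 256.0 MiB uncompressed
print(compressed_bytes(raw, ratio=8) / MIB)   # 32.0 MiB at the assumed 8:1 ratio
```

A material usually needs several such textures (colour, normals, roughness, and so on), so the savings multiply across a scene.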

Part 3. Limits and Challenges of Neural Rendering

While these applications are groundbreaking, implementing AI into a production pipeline comes with a unique set of hurdles that creators must navigate. Understanding the limits of this technology is key to knowing when to stick with traditional methods and when to innovate. So, if you are considering neural rendering tools such as NVIDIA's, here are 5 pitfalls and challenges to be aware of:

- High Computational and Memory Costs: State-of-the-art models are heavy and often require large amounts of GPU memory and bandwidth to evaluate pixels. This makes it difficult to run complex scenes on mobile devices or low-end PCs without specialized software.

- Long Training and Data Needs: These systems often require hours or even days of training on powerful GPUs before they become usable for real-time tasks. Furthermore, you need a large amount of diverse, high-quality data to prevent the AI from failing in new situations.

- Limited Generalization and Robustness: Models often struggle with scenes or lighting conditions they haven’t seen before, leading to blur and visual artifacts. Complex effects like reflections and transparency also remain difficult to replicate without specific, scene-by-scene optimization.

- Workflow and Integration Friction: Most current game engines were not originally built around neural methods, which makes these methods hard to integrate into existing professional pipelines. In addition, artists find it difficult to edit black-box neural weights compared to traditional, predictable 3D tools.

- Quality Control and Ethical Risks: Neural renders can produce unexpected flicker or misaligned textures that are hard for developers to diagnose and fix. Additionally, the ability to create deepfake-style content raises serious concerns regarding privacy and authenticity.

Part 4. Why Traditional Rendering Is Still Important

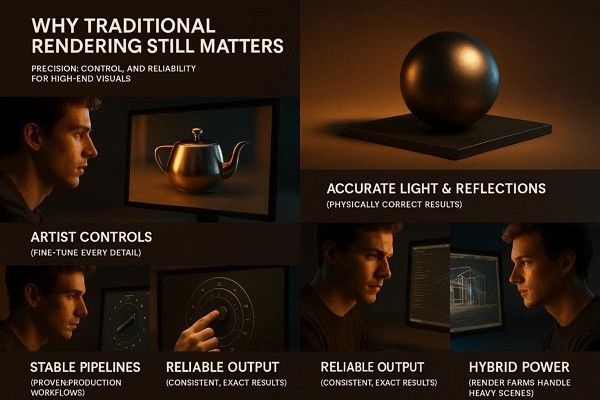

Even though technologies like neural rendering and AI-based shading (such as RTX neural faces) are rapidly evolving, traditional rendering remains the foundation of most professional production pipelines. Here are some strengths of traditional rendering that current AI methods still cannot fully replace:

- Predictable Physical Realism: Traditional techniques like ray tracing follow physical laws to simulate how light bounces, reflects, and refracts. This keeps every frame physically grounded and consistent, which is a requirement for high-end film and product design.

- Full Artistic and Technical Control: Artists have fine-grained control over every light, shader, and vertex within their scene. Unlike AI black boxes, traditional tools allow supervisors to turn specific knobs for sampling and noise to get the exact look they want.

- Mature and Battle-Tested Pipelines: The tools and plugins used in traditional rendering have been refined over decades for massive studio teams. This maturity means fewer surprises during big productions, as workflows for asset management and version control are already standardized.

- Reliability for Regulated Work: In fields such as medical imaging or engineering, renderings must be deterministic and explainable for safety and legal reasons. Traditional rendering produces an image that is a direct calculation from the data, rather than an AI’s approximation.

- An Additive Hybrid Future: Neural rendering is currently seen as a complement to traditional methods, used primarily for specific tasks such as denoising or upscaling. In this hybrid world, render farms remain vital for processing the heavy, high-resolution base frames that AI cannot yet handle.

However, while traditional rendering is reliable, it is also extremely resource-intensive. High-resolution scenes, complex lighting setups, and animation sequences can take hours or even days to render on a single machine. This often becomes a major bottleneck in production, especially under tight deadlines or large-scale studio workflows.

To address this limitation, many studios rely on cloud-based rendering solutions. Fox Renderfarm is one such render farm that helps offload heavy rendering tasks to distributed GPU and CPU clusters. By processing frames in parallel across thousands of nodes, it significantly reduces render times while maintaining physical accuracy.

In addition, Fox Renderfarm supports industry-standard pipelines with secure, high-speed file transfer powered by Raysync technology, making it suitable for large production environments that require both performance and reliability.
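
The benefit of rendering frames in parallel can be estimated with a back-of-the-envelope calculation. The sketch below assumes every frame takes the same time and each node renders whole frames; it ignores upload, queueing, and download time. The function name and all numbers are illustrative assumptions, not figures from any render farm.

```python
import math

def wall_clock_minutes(frames, minutes_per_frame, nodes):
    """Estimate total time when frames are split evenly across nodes.

    The busiest node renders ceil(frames / nodes) frames, and that node
    determines when the whole job finishes.
    """
    if nodes < 1:
        raise ValueError("nodes must be at least 1")
    return math.ceil(frames / nodes) * minutes_per_frame

# A 10-second shot at 24 fps, assuming 30 minutes per frame.
single_machine = wall_clock_minutes(240, 30, nodes=1)
small_farm = wall_clock_minutes(240, 30, nodes=100)

print(single_machine / 60)  # 120.0 hours on one machine
print(small_farm / 60)      # 1.5 hours across 100 nodes
```

Because frames are independent of one another, this kind of job scales well until the node count approaches the frame count.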

Part 5. When to Use Neural Rendering Today?

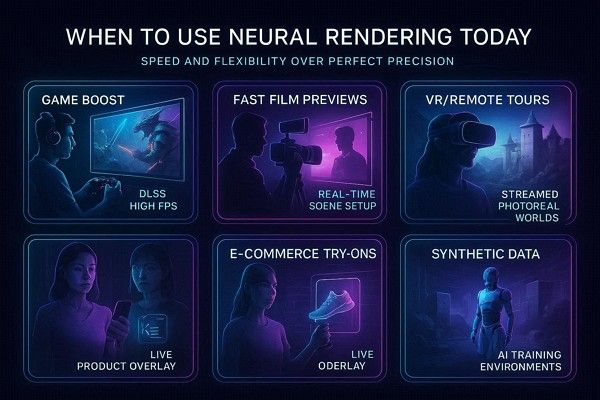

Neural rendering is best suited for scenarios where speed, interactivity, and flexibility are more important than physically accurate results. It is not intended to fully replace traditional rendering pipelines, but rather to complement them in specific use cases.

In practice, neural rendering is most useful in situations that require real-time feedback, rapid iteration, or AI-assisted visual generation. Below are five common scenarios where this approach can be particularly effective:

- Boosting Game Performance: AI tools like DLSS render at lower resolutions and use neural networks to reconstruct a sharp 4K image at a high frame rate. This allows you to experience heavy ray tracing on mid-range hardware without sacrificing visual clarity or smoothness.

- Fast Previews in Film: Directors can use neural rendering to get fast, near-final previews of lighting and camera layouts during virtual production rehearsals. This bridges the gap between rough sketches and the final frame, allowing for faster creative iteration on set.

- Interactive VR and Remote Tours: Neural methods also allow photorealistic 3D environments to be streamed over the internet to headsets or browsers with low latency. This makes them well suited to remote digital twins or virtual real estate tours where bandwidth is a major constraint.

- Personalization in E-commerce: Retailers can use AI to realistically overlay clothing or furniture onto live camera feeds, for instance in personalized virtual try-ons. This provides a responsive, custom experience for every user that traditional offline rendering cannot.

- Synthetic Data for AI Training: Neural rendering can generate millions of varied, photorealistic images to safely train self-driving cars and robots in rare or dangerous scenarios. This allows developers to simulate extreme weather and traffic incidents that would be impossible or risky to capture in reality.
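
The performance gain from upscaling, mentioned in the game performance item above, comes largely from shading fewer pixels. The sketch below assumes a 1920 x 1080 internal render upscaled to a 3840 x 2160 output; the exact internal resolution depends on the upscaler and its quality setting, so treat these numbers as an example only.

```python
def pixel_count(width, height):
    """Number of pixels in a frame of the given resolution."""
    return width * height

internal = pixel_count(1920, 1080)   # pixels actually rendered (assumed)
output = pixel_count(3840, 2160)     # pixels shown on a 4K display

print(internal)            # 2073600
print(output)              # 8294400
print(internal / output)   # 0.25, so only a quarter of the output pixels are rendered
```

The neural network reconstructs the remaining detail, which is why the final frame rate depends on both the reduced rendering cost and the cost of running the network itself.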

Frequently Asked Questions

1. Is neural rendering the same as a video?

No. It is a live 3D environment that you can move through, with the AI helping to draw frames in real time. This makes it interactive and dynamic, unlike a pre-rendered video file that plays back the same way every time.

2. Does neural rendering replace a 3D farm?

Not yet. Traditional rendering is still the most reliable way to get predictable, physically grounded results for final films. A professional 3D farm is still needed to process the high-resolution base frames that AI models aren't yet capable of handling.

3. Will AI rendering work on my old computer?

Most modern neural techniques, including NVIDIA's neural rendering tools, rely on specialized hardware such as Tensor Cores to run effectively. Without a modern GPU, you may experience significant lag or be unable to run high-resolution AI synthesis at all.

Conclusion

Neural rendering is transforming 3D production by enabling faster real-time synthesis and AI-assisted previews. It improves iteration speed and workflow efficiency, especially in early-stage design.

However, it still has limitations in physical accuracy and final production quality. For this reason, traditional rendering remains essential for cinematic, production-grade results.

In most pipelines, both are used together: neural rendering for speed and traditional rendering for final output. Cloud render farms like Fox Renderfarm support this workflow by handling heavy, high-resolution scenes through scalable GPU and CPU resources.