Sharing a 3D Scene Reproduction from 'For Honor'

In this article, Fox Renderfarm, the CG industry's leading cloud rendering service provider and render farm, shares a 3D scene that a team of students produced together, based on Ubisoft's game "For Honor".

Final result:

1. Analyzing the Original Artwork and Building the Rough Scene

We used a standard PBR production pipeline for this project. To reproduce the scene's proportions accurately, every team member first studied the location in the game itself. After that, individual tasks were assigned to each person.

To keep the model proportions accurate, our team leader blocked out the entire scene with basic geometric shapes and handed these blockout models to each member as a scale reference.

Rough scene model

2. Making the Mid-poly Models

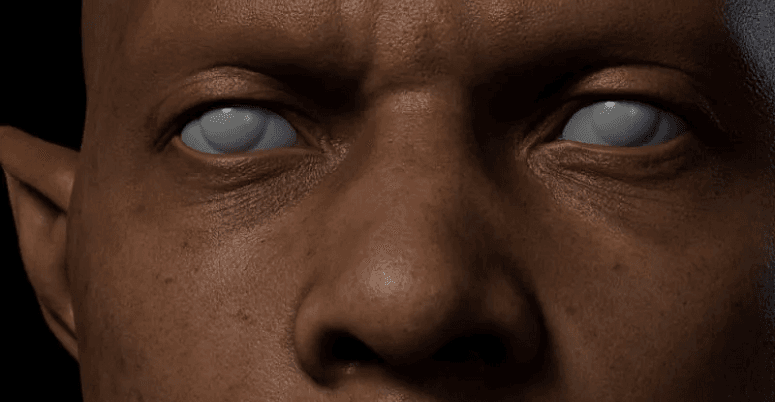

Character sculpture: At first I was very confused, because I had never done any character sculpting before. After consulting my teacher, I got some direction. The plan was to start from a basic human model in ZBrush and pose it, then reduce the polygon count and export it as a reference for building the equipment on the body in 3ds Max. The head, hands, feet, and equipment were all made separately. For the head, I adjusted the face starting from a basic male head model in ZBrush, and blocked out the hair and beard from two basic spheres. Because I had no ZBrush sculpting experience, I had to spend some time every day learning the basics, which made me the slowest member of the group throughout the entire production.

Mid-poly model

Small objects: The small objects were not complicated for me. I needed to refine each model's structure based on the rough model. It was important to pay attention to the object's topology and always plan where edge loops would be needed for the high-poly stage. During production, it was crucial to keep each small object the same size as its rough model; otherwise, the proportions in the scene would be wrong. For some small objects, such as wooden planks, locks, and iron rings, it was enough to create two or three variants and reuse them throughout the scene.
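One way to make the size check above concrete is to compare a refined prop's bounding box with the dimensions of its blockout shape. The sketch below is a hypothetical helper, not part of the team's pipeline; it assumes the mesh is available as a list of (x, y, z) vertex positions in meters, and the 5% tolerance is an assumed value.

```python
def bounding_box_size(points):
    """Return the (x, y, z) extents of a list of (x, y, z) points."""
    xs, ys, zs = zip(*points)
    return (max(xs) - min(xs), max(ys) - min(ys), max(zs) - min(zs))


def matches_blockout(points, blockout_size, tolerance=0.05):
    """True if every extent is within `tolerance` (a fraction) of the blockout size.

    Hypothetical helper: the 5% default is an assumption, not a team standard.
    """
    size = bounding_box_size(points)
    return all(abs(s - b) <= tolerance * b for s, b in zip(size, blockout_size))


# A refined plank whose vertices span 2.0 x 0.3 x 0.05 meters.
plank = [(0.0, 0.0, 0.0), (2.0, 0.3, 0.05), (1.0, 0.1, 0.02)]
print(matches_blockout(plank, (2.0, 0.3, 0.05)))  # True
print(matches_blockout(plank, (2.5, 0.3, 0.05)))  # False: 0.5 m shorter than the blockout
```

Checking every extent separately catches a prop that has the right length but the wrong thickness, which a single volume comparison could miss.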

Wall pillar: This part required four-way continuous (tileable) patterns, which connect seamlessly on all four sides so the pattern can repeat in every direction. The low-poly walls can then be textured directly with such a pattern. I made a lot of mistakes at the beginning, but with my teacher's guidance I learned to use ZBrush's Deformation -> Offset feature to create correct four-way continuous patterns.
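The idea behind checking a four-way continuous pattern can be illustrated with a wrap-around offset, the same idea as an image editor's Offset filter: shifting the pattern by half its width and height moves the outer edges into the middle, where any seam becomes easy to see. This is a generic sketch on a small grid of height values, not ZBrush's implementation, and the function names are hypothetical.

```python
def offset_wrap(grid, dx, dy):
    """Shift a 2D grid by (dx, dy) cells; cells pushed off one side re-enter on the other."""
    h, w = len(grid), len(grid[0])
    return [[grid[(y - dy) % h][(x - dx) % w] for x in range(w)] for y in range(h)]


def max_wrap_jump(grid):
    """Largest value jump across the wrap boundaries (right->left and bottom->top).

    A four-way continuous pattern should show jumps here no larger than
    those between neighbouring cells inside the pattern.
    """
    h, w = len(grid), len(grid[0])
    horizontal = max(abs(grid[y][w - 1] - grid[y][0]) for y in range(h))
    vertical = max(abs(grid[h - 1][x] - grid[0][x]) for x in range(w))
    return max(horizontal, vertical)


pattern = [
    [0, 1, 2, 1],
    [1, 2, 3, 2],
    [2, 3, 4, 3],
    [1, 2, 3, 2],
]
# Offset by half the size: the former corner cell now sits in the middle.
centered = offset_wrap(pattern, len(pattern[0]) // 2, len(pattern) // 2)
print(max_wrap_jump(pattern))         # 1: the edges continue smoothly
print(max_wrap_jump([[0, 1, 2, 3]]))  # 3: a plain gradient leaves a visible seam
```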

The mid-poly model serves only as a transition; its purpose is to make it easier to build high-poly models with as much detail as possible. The components should be built as separate, interpenetrating pieces rather than merged into a single object, because a single merged object would be troublesome to work with and would also make sculpting difficult.

Mid-poly scene

3. Making the High-poly Models

I found it really challenging to sculpt a human figure in stone. I thought about many complex techniques, but in the end I realized that simplicity was the key. I approached the sculpture by working on each part separately, starting with the face. I collected a lot of references, mostly of elderly men, as well as some images of muscles and bones.

Since I wanted to create a stone statue of a person, capturing the subject's characteristics was essential. I pushed down the center of his forehead to give him a stern look. I gave each facial feature strong volume, and since he was meant to look older, I carved deeper eye sockets and more pronounced cheekbones. Sculpting the fabric was also quite challenging, but after studying and practicing, I got much better at it.

Sculpting high-poly model

Stone and wood: In this part, the team ran into some issues. The wood looked unnatural and lacked variation, and in some cases the grain even ran in a skewed direction. Meanwhile, the stone was carved too softly, without any layering. The best way to solve these problems is to observe the small details we often overlook in daily life, and then be more careful and patient when sculpting. It is essential to consider the intrinsic characteristics of the material and avoid adding detail just for its own sake.

Sculpting small objects

Pillar pattern: With our teacher's guidance, we learned to use grayscale images to create decorative relief. Using this method saved us a lot of time on the project.
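As a rough illustration of how a grayscale image can drive decorative relief, each pixel's brightness can be mapped to a height above a flat surface, with black staying flat and white rising to a chosen maximum depth. This is a simplified sketch that assumes 8-bit values (0 to 255) on a regular grid; the function and its parameters are hypothetical and do not reproduce the exact ZBrush workflow.

```python
def grayscale_to_relief(pixels, max_depth, cell_size=1.0):
    """Convert a grid of 8-bit gray values into (x, y, z) points on a relief surface.

    Black (0) stays on the base plane; white (255) is raised by `max_depth`.
    `cell_size` is the spacing between neighbouring pixels in scene units.
    """
    points = []
    for row, line in enumerate(pixels):
        for col, value in enumerate(line):
            height = (value / 255.0) * max_depth
            points.append((col * cell_size, row * cell_size, height))
    return points


# A 2 x 3 strip: a raised ridge along the middle column.
ornament = [
    [0, 255, 0],
    [0, 255, 0],
]
relief = grayscale_to_relief(ornament, max_depth=0.02, cell_size=0.01)
print(relief[:3])
```

Because the depth is a single parameter, the same grayscale ornament can be reused on several pillars at different relief strengths.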

Pillar pattern

High-poly scene

4. Making the Low-poly Models

For irregular models such as the figurines, eagles, and skulls, we exported the high-poly models and imported them into TopoGun for retopology. We drew the new topology by hand on top of the high-poly models, following the key structural points while keeping the face count low. Wherever possible, the convex forms were captured in the topology, while small details such as dents and damage were left to the normal maps instead of being modeled as actual geometry. It is important to follow the original structure when retopologizing! Due to our lack of experience, we ran into various problems during retopology, but we improved gradually. Regular objects were created by adding and removing edge loops on the mid-poly models from the earlier stage.

Topology model

After completing the low-poly models, it was time to unwrap the UVs. This raised the question of how many textures to use and at what size. Since this was a personal project, we standardized the texel density at 1 m = 512 pixels, aiming to keep the pixel density consistent throughout the entire scene. For the figurines, I used four 4096-pixel textures, and the whole scene used more than 30 textures. If any member failed to organize their files properly, integration became difficult, so it is crucial to follow the standards when submitting files.
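The 512-pixels-per-meter standard turns texture sizing into simple arithmetic: a UV region covering L meters needs about L × 512 pixels, rounded up to a power of two. The helper below is a hypothetical sketch that assumes square textures and a 4096-pixel cap (the largest size mentioned above).

```python
PIXELS_PER_METER = 512  # the team's standard: 1 m of surface = 512 px


def texture_size_for(extent_m, ppm=PIXELS_PER_METER, max_size=4096):
    """Smallest power-of-two texture edge covering `extent_m` meters at `ppm`.

    Assumes a square texture; the result is capped at `max_size`.
    """
    needed = extent_m * ppm
    size = 1
    while size < needed and size < max_size:
        size *= 2
    return size


def texel_density(texture_px, extent_m):
    """Pixels per meter actually achieved when `texture_px` spans `extent_m` meters."""
    return texture_px / extent_m


print(texture_size_for(1.0))     # 512
print(texture_size_for(3.0))     # 2048 (1536 px needed, rounded up)
print(texel_density(4096, 8.0))  # 512.0: one 4096 map covers 8 m at the standard
```

Rounding up keeps every object at or above the agreed density; a team could instead round to the nearest size and accept small variations.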

Next, it was time to bake the normal maps. We chose Marmoset Toolbag for baking. Because the retopologized models had been built directly on top of the high-poly models, the high- and low-poly versions already matched, so there was no need to align them again. For regular models, however, the high- and low-poly versions had to be matched up in 3ds Max first. We then separated the high- and low-poly parts into groups before importing them into Marmoset Toolbag for baking.

Marmoset Toolbag makes it convenient to fix flawed areas of a normal map by hand. During baking, we made sure the cage completely enclosed the high-poly models. Minor normal map issues can be fixed in Photoshop. Major problems, however, require going back to find the cause, for example a smoothing group that was split along an edge where the UVs were not also split.
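When a normal map is touched up by hand in Photoshop, painted or blurred pixels may no longer encode unit-length vectors, so a common safeguard is to renormalize the edited texels. The sketch below assumes the usual 8-bit tangent-space encoding, where each channel maps [0, 255] to [-1, 1]; the helper names are hypothetical.

```python
import math


def decode_normal(rgb):
    """Map 8-bit RGB channels [0, 255] to vector components [-1, 1]."""
    return tuple(c / 255.0 * 2.0 - 1.0 for c in rgb)


def encode_normal(n):
    """Map vector components [-1, 1] back to 8-bit channels [0, 255]."""
    return tuple(round((c + 1.0) * 0.5 * 255.0) for c in n)


def renormalize_texel(rgb):
    """Rescale a hand-edited normal-map texel so that it encodes a unit vector."""
    x, y, z = decode_normal(rgb)
    length = math.sqrt(x * x + y * y + z * z)
    if length == 0.0:
        return (128, 128, 255)  # fall back to a flat, straight-up normal
    return encode_normal((x / length, y / length, z / length))


print(renormalize_texel((128, 128, 255)))  # (128, 128, 255): already unit length
print(renormalize_texel((191, 128, 191)))  # (218, 128, 218): a too-short vector stretched back to length 1
```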

5. Making the Materials

Our approach to materials is to first establish the object's color and surface quality, then build up its volume, add textures, and finally add details. To keep the material tone consistent across the entire scene, one student created the base materials, established the tone, and then exported them as smart materials for the others to use. Everyone could then add texture details suited to their own model structures while staying within the established tone.

An effective material workflow requires collaboration and communication. Working in isolation and obsessing over the details of individual models while neglecting the overall look and tonal consistency leads to problems.

Layering materials can create more intricate effects, so it is advisable to experiment with different combinations.
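Layering can be pictured as a stack in which each layer is blended over the result below it through a mask, much like the layer stacks in texturing tools. This is a deliberately simplified sketch that assumes plain linear blending of RGB values in [0, 1] with one scalar mask value per layer; real tools offer many more blend modes, and the names here are hypothetical.

```python
def lerp(a, b, t):
    """Linear interpolation between a and b by t in [0, 1]."""
    return a + (b - a) * t


def blend_layers(base, layers):
    """Blend (color, mask) layers over a base color, from bottom to top.

    `color` is an (r, g, b) tuple in [0, 1]; `mask` is the layer's coverage
    at this pixel, from 0 (hidden) to 1 (fully covering).
    """
    result = base
    for color, mask in layers:
        result = tuple(lerp(r, c, mask) for r, c in zip(result, color))
    return result


stone = (0.5, 0.5, 0.5)
moss = ((0.2, 0.4, 0.1), 0.5)  # half-covered by moss at this pixel
dirt = ((0.3, 0.2, 0.1), 0.0)  # masked out at this pixel
print(blend_layers(stone, [moss, dirt]))
```

Changing only the masks, while keeping the layer colors fixed, is what lets several people reuse the same layered material and still fit it to their own models.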

6. Rendering

We rendered the scene in Unreal Engine 4. Since we were new to the engine, the team leader learned it while adjusting the visuals. While looking for a way to bring files from 3ds Max into Unreal Engine 4, the team leader discovered Unreal Datasmith, an official Unreal Engine import plugin, which greatly sped up our work by letting us import all the models into Unreal Engine 4.

The biggest challenge we faced during asset integration was not knowing which map belonged to which model. The team leader therefore asked each member to organize their own models and maps into separate groups. For example, if two items shared a map, a screenshot showing the map together with those items had to be provided, with the map's size labeled. Later we found that the materials looked inconsistent, appearing either too dark or too bright, possibly because of lighting issues.
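The map-to-model bookkeeping described above can also be checked mechanically. The sketch below assumes each member submits a simple manifest listing which maps each model uses, plus the size of each delivered map; it reports maps that were listed but never delivered, and maps shared by several models. The manifest format and all model and map names are hypothetical.

```python
def audit_manifest(model_maps, map_sizes):
    """Check a hand-made asset manifest.

    model_maps: {model name: [map names it uses]}
    map_sizes:  {map name: edge size in pixels} for every map actually delivered
    Returns (sorted list of missing maps, {shared map: sorted models using it}).
    """
    missing = sorted({m for maps in model_maps.values() for m in maps if m not in map_sizes})
    users = {}
    for model, maps in model_maps.items():
        for m in maps:
            users.setdefault(m, []).append(model)
    shared = {m: sorted(models) for m, models in users.items() if len(models) > 1}
    return missing, shared


model_maps = {
    "plank_A": ["T_Wood_D", "T_Wood_N"],
    "plank_B": ["T_Wood_D", "T_Wood_N"],
    "iron_ring": ["T_Iron_D", "T_Iron_N"],
}
map_sizes = {"T_Wood_D": 2048, "T_Wood_N": 2048, "T_Iron_D": 1024}
missing, shared = audit_manifest(model_maps, map_sizes)
print(missing)  # ['T_Iron_N']
print(shared)   # {'T_Wood_D': ['plank_A', 'plank_B'], 'T_Wood_N': ['plank_A', 'plank_B']}
```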

In Unreal Engine, the team leader set up separate, detailed folders for each person's models, maps, and material files. Different folders were given different colors, which made organizing and replacing files more efficient.

Conclusion

That is our account of the entire production process. In conclusion, I would like to say that the process matters more than the outcome. Because of our lack of experience, our final work fell noticeably short of what we had hoped for. However, we gained a great deal from the process: we learned about teamwork, picked up technical knowledge, and came away with many life lessons. Producing this scene was a valuable experience for all of us. So I just want to say: we are all the best!

Source: Thepoly